A self-driving car is traveling down the highway. A ball rolls onto the road, followed by a young child, and the vehicle must stop immediately. It stops in time, yet it has had no time to send video of the incident to a server many miles away and wait for instructions. How was it able to react so quickly?

To be effective, a smart robot often has to act immediately, and that need runs into a basic limitation: data cannot always travel over the Internet to a remote location and back fast enough for a useful reply. This delay is called latency. For a delivery drone steering around a bird, or a robotic surgical tool making a precise cut, the margin of error can be a matter of life and death.

To support real-time decision making, a distributed model of robotic intelligence has been developed. This means that instead of having the robot’s brain in one place, there are brains in many places. The robot (device) is home to a relatively small amount of intelligence focused on very fast decisions and quick reactions; a larger amount of intelligence focused on longer-term decisions and learning is located in “the cloud.”

The ability to leverage this powerful partnership is the core concept behind Hybrid Cloud Robotics and will enable us to create autonomous vehicles, greatly improve the efficiency of automated warehouse systems, and create an entirely new class of intelligent technology.

Hybrid Cloud Edge Robotics: Cloud Intelligence Meets Real-Time Robotic Action

Hybrid cloud and edge computing have dramatically changed the way robots perceive their surroundings, avoid obstacles, plan routes, and learn. Hybrid Cloud Edge Robotics brings cloud-scale intelligence to robots while keeping control real-time, so robots can react quickly to changing conditions and still benefit from large models, big data, and learning across the entire fleet.

In simple terms, Hybrid Cloud Edge Robotics enables a robot’s “reflexes” (edge) to occur at the machine level and its “brain growth” (cloud) to occur using shared cloud resources. As robots move out of the lab into warehouse environments, hospital settings, city streets, and factory floors, this combination becomes increasingly critical, as seconds or milliseconds can make the difference.

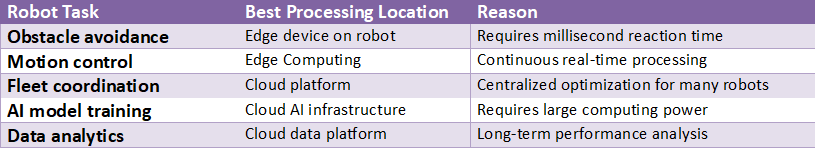

On the edge side of Hybrid Cloud Edge Robotics, time-sensitive functions such as sensor fusion, obstacle detection, motion control loop execution, safety stop implementation, and updating local perception models are handled. On the cloud side of Hybrid Cloud Edge Robotics, scalable functions such as model development via simulation, long-horizon planning, analytics, and policy optimization for the entire fleet are executed. By utilizing Hybrid Cloud Edge Robotics, organizations can manage the cost of processing their robot data and maintain system responsiveness even if cloud connectivity is interrupted.

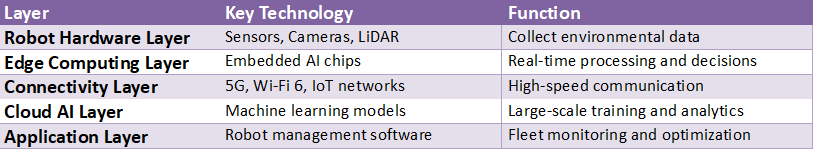

A hybrid cloud edge robotics architecture usually has three layers: the robot, the edge node, and the cloud. Each layer performs different functions. The robot carries cameras, lidar, encoders, and an onboard processing module that lets it control its own movement immediately.

The edge node is usually located in a rack within the facility or at a 5G/MEC (multi-access edge computing) site. It aggregates data from multiple robots, stores cached models, and provides low-latency services such as mapping, coordination, and video analytics. The cloud, in turn, provides a central location for device and identity management, data lakes, and data pipelines. The architecture is strongest when all three layers communicate through consistent APIs, logging, and model versioning.
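As a rough illustration of how work might be divided among the three layers, the sketch below assigns each workload to the layer with the most compute that can still meet its response-time deadline. The per-layer round-trip times and the example deadlines are hypothetical placeholders, not measurements; real placement decisions also weigh bandwidth, privacy, and cost.

```python
# Hypothetical round-trip times per layer, in milliseconds (placeholders, not measurements).
LAYER_RTT_MS = {"robot": 1.0, "edge": 10.0, "cloud": 100.0}

# Layers ordered from most to least compute, so loose deadlines go to the cloud.
PREFERENCE = ["cloud", "edge", "robot"]


def place_workload(deadline_ms: float) -> str:
    """Return the most capable layer whose round-trip time fits within the deadline."""
    for layer in PREFERENCE:
        if LAYER_RTT_MS[layer] <= deadline_ms:
            return layer
    raise ValueError(f"no layer can meet a {deadline_ms} ms deadline")


# Hypothetical workloads and their response-time deadlines.
workloads = {
    "safety_stop": 5.0,
    "multi_robot_coordination": 50.0,
    "nightly_model_retraining": 8 * 3600 * 1000.0,
}

for name, deadline in workloads.items():
    print(f"{name}: {place_workload(deadline)}")
```

With these placeholder numbers, the safety stop lands on the robot, coordination on the edge node, and retraining in the cloud.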

Much of the strength of Hybrid Cloud Edge Robotics comes from how data flows through it. Robots generate very large volumes of sensor data, and sending all of it to the cloud is costly and slow. Instead, the edge pipeline filters and compresses the data and forwards only what is needed, e.g., events, embeddings, anomalies, and short video clips around incidents.
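A minimal sketch of the "short clips around incidents" idea, assuming an on-robot model already produces an anomaly score for each frame (the score, threshold, and window sizes are hypothetical). The edge keeps a small ring buffer of recent frames and, when a frame crosses the threshold, queues only that frame plus a few frames before and after it for upload.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Frame:
    t: float        # timestamp in seconds
    anomaly: float  # hypothetical anomaly score from an on-robot model, 0.0 to 1.0


class IncidentClipper:
    """Queue short clips around anomalous frames instead of uploading every frame."""

    def __init__(self, pre_frames: int = 3, post_frames: int = 2, threshold: float = 0.8):
        self.threshold = threshold
        self.post_frames = post_frames
        self._history = deque(maxlen=pre_frames)  # most recent frames before an incident
        self._clip = None                         # clip currently being assembled
        self._remaining = 0                       # frames still to add to the open clip
        self.clips = []                           # finished clips waiting for upload

    def push(self, frame: Frame) -> None:
        if self._clip is None and frame.anomaly >= self.threshold:
            # Incident detected: seed the clip with the buffered pre-incident frames.
            self._clip = list(self._history)
            self._remaining = self.post_frames + 1  # the incident frame plus post frames
        if self._clip is not None:
            self._clip.append(frame)
            self._remaining -= 1
            if self._remaining == 0:
                self.clips.append(self._clip)
                self._clip = None
        self._history.append(frame)


clipper = IncidentClipper()
for t in range(10):
    clipper.push(Frame(t=float(t), anomaly=0.9 if t == 5 else 0.1))

print([f.t for f in clipper.clips[0]])  # frames 2.0 through 7.0 are kept
```

In this toy run, six of ten frames are queued; in practice incidents are rare, so the bandwidth savings are far larger.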

In this way, Hybrid Cloud Edge Robotics lets you keep sensitive data local while still generating valuable training data through de-identification, aggregation, or federated learning. The cloud trains better perception and decision-making models, and the edge deploys them safely through staged rollouts, canary robots, and immediate rollbacks.
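Of the options named above, federated learning is the easiest to show in a few lines. The sketch below implements the aggregation step of federated averaging (FedAvg): each site trains locally and sends only its model weights and sample count, and the cloud computes a sample-weighted mean, so raw sensor data never leaves the site. Plain Python lists stand in for real model tensors, and the numbers are made up.

```python
from typing import List, Tuple


def federated_average(updates: List[Tuple[List[float], int]]) -> List[float]:
    """Sample-weighted mean of per-site model weights (the FedAvg aggregation step).

    Each update is (weights, num_local_samples); raw training data is never shared.
    """
    total = sum(n for _, n in updates)
    if total == 0:
        raise ValueError("no training samples reported")
    averaged = [0.0] * len(updates[0][0])
    for weights, n in updates:
        for i, w in enumerate(weights):
            averaged[i] += w * n / total
    return averaged


# Hypothetical updates from two robots' locally trained models.
site_a = ([1.0, 2.0], 100)
site_b = ([3.0, 4.0], 300)
print(federated_average([site_a, site_b]))  # [2.5, 3.5]
```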

Reliability for real-time actions is also essential. The Hybrid Cloud Edge Robotics solution will degrade safely and continue to allow robot operation even if network performance is poor. Robots will be able to offload computationally intensive tasks to remote servers, update routing information, and continuously synchronize their map data as soon as a reliable network connection is available. As network performance deteriorates, the robots will operate autonomously and avoid creating unsafe situations.

To accomplish this, Hybrid Cloud Edge Robotics often combines local caching (to keep using the most recent model or policy), heartbeat monitoring (to verify communication), and “safe mode” functions that reduce robot speed or return the robot to a safe operating state. This keeps production running during an outage, and the robot can resume receiving improvements once network connectivity is restored.
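The heartbeat and safe-mode logic might look like the following minimal sketch. The timeout and speed values are hypothetical, and the clock is injectable so the behavior can be tested without real delays. A production system would typically also enforce safe mode inside the motion controller itself, not in application code alone.

```python
import time
from typing import Callable


class HeartbeatWatchdog:
    """Drop to a reduced 'safe mode' speed when heartbeats stop arriving."""

    def __init__(self, timeout_s: float = 0.5, normal_speed: float = 1.5,
                 safe_speed: float = 0.3, clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s
        self.normal_speed = normal_speed  # m/s, hypothetical value
        self.safe_speed = safe_speed      # m/s, hypothetical value
        self.clock = clock
        self.last_beat = clock()

    def on_heartbeat(self) -> None:
        """Call whenever a heartbeat message arrives from the edge node or cloud."""
        self.last_beat = self.clock()

    def in_safe_mode(self) -> bool:
        return self.clock() - self.last_beat > self.timeout_s

    def speed_limit(self) -> float:
        """Speed cap the motion controller should respect right now."""
        return self.safe_speed if self.in_safe_mode() else self.normal_speed


# Simulated clock so the example runs instantly.
now = [0.0]
watchdog = HeartbeatWatchdog(clock=lambda: now[0])
now[0] = 0.2
print(watchdog.speed_limit())  # 1.5: heartbeat is recent
now[0] = 1.0
print(watchdog.speed_limit())  # 0.3: heartbeat missed, safe mode
watchdog.on_heartbeat()
print(watchdog.speed_limit())  # 1.5: link restored
```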

Hybrid Cloud Edge Robotics is already in use today. In warehouses, large fleets of mobile robots navigate the aisles and choose routes through local decision-making, while the cloud analyzes fleet data to find the best overall configuration and improve throughput each shift. In manufacturing, edge computing provides precise robotic arm control, while the cloud analyzes quality trends and updates machine vision models across multiple sites.

Service robots in healthcare must make navigation decisions immediately while respecting strict privacy controls on sensor data collected near patients. Hybrid Cloud Edge Robotics supports both needs: sensor data from patient areas is processed locally, while the cloud handles analysis for predictive maintenance and planning.

Security and governance for Hybrid Cloud Edge Robotics must be built in from the outset, not added after development is complete. Robots are cyber-physical systems (CPS), so a security breach can have physical consequences, including injury. At a minimum, Hybrid Cloud Edge Robotics therefore requires strong device identities, end-to-end encryption, digitally signed model artifacts, and role-based access control.
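To illustrate the "signed model artifacts" requirement, here is a minimal sketch in which the robot refuses to load a model whose signature does not verify. To stay within Python's standard library, it uses an HMAC-SHA256 tag with a shared key as a stand-in; a real deployment would more likely use asymmetric signatures (for example, Ed25519), so robots hold only a public key and cannot sign artifacts themselves. The key and model bytes are placeholders.

```python
import hashlib
import hmac


def sign_artifact(model_bytes: bytes, key: bytes) -> str:
    """Produce an HMAC-SHA256 tag for a model file (stand-in for a real signature)."""
    return hmac.new(key, model_bytes, hashlib.sha256).hexdigest()


def verify_artifact(model_bytes: bytes, tag: str, key: bytes) -> bool:
    """Constant-time comparison avoids leaking how many characters matched."""
    return hmac.compare_digest(sign_artifact(model_bytes, key), tag)


def load_model(model_bytes: bytes, tag: str, key: bytes) -> bytes:
    if not verify_artifact(model_bytes, tag, key):
        raise PermissionError("model signature check failed; refusing to load")
    return model_bytes  # placeholder for real model deserialization


KEY = b"placeholder-key-from-a-secure-store"  # hypothetical; never hard-code real keys
model = b"perception-model-v2-weights"
tag = sign_artifact(model, KEY)

print(verify_artifact(model, tag, KEY))         # True
print(verify_artifact(model + b"x", tag, KEY))  # False: tampered artifact
```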

Hybrid Cloud Edge Robotics also requires an audit trail that records who deployed a specific model, when model parameters were changed, and why a robot made a given decision. Policies must also cover what types of data may leave a site, how long data is retained, and how model updates are approved, especially in regulated environments.
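One common way to make such an audit trail tamper-evident is to chain entries by hash, so editing any past record breaks every later link. The sketch below is a minimal, in-memory illustration; the field names (actor, action, reason) are hypothetical, and a real system would persist entries to write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry stores the hash of the one before it."""

    def __init__(self):
        self.entries = []

    def record(self, actor: str, action: str, reason: str) -> dict:
        body = {
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "reason": reason,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry makes this False."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.record("alice", "deploy model perception-v2 to site-3", "passed canary stage")
log.record("bob", "raise obstacle threshold to 0.7", "too many false stops")
print(log.verify())  # True
log.entries[0]["reason"] = "edited later"
print(log.verify())  # False
```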

Operations and monitoring mark the move from prototype to production in Hybrid Cloud Edge Robotics. To make this transition, teams must be able to track latency, battery life, motor temperature, sensor drift, and model performance. Typical supporting features include fleet dashboards, over-the-air model updates, remote debugging, and automatic incident capture with failure analysis to support learning.

Hybrid Cloud Edge Robotics continues to rely on classical control methods, combined with edge AI and the scale of cloud-based training. Larger foundation models are expected to generalize better across robots; however, because edge hardware has limited resources, deploying them will still require efficient inference, model quantization, and specialized hardware accelerators.
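Quantization is one of the techniques named above. As a simplified illustration, the sketch below applies symmetric per-tensor int8 quantization to a short list of weights: each weight is stored as an 8-bit integer plus one shared floating-point scale, cutting storage from 4 bytes (float32) to 1 byte per weight at the cost of a bounded rounding error. Real toolchains add per-channel scales, calibration data, and integer kernels; the weights here are made up.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric int8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    return [scale * v for v in q]


weights = [0.42, -1.27, 0.0, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale)
print(f"max error {max_error:.5f} (bound: scale / 2 = {scale / 2:.5f})")
```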

We will also see additional enhancements to Hybrid Cloud Edge Robotics through synthetic data pipelines, next-generation simulators, and digital twins to support systems learning in a controlled environment before deployment to the physical world. The ultimate advantage of Hybrid Cloud Edge Robotics has always been this: the cloud will provide the intelligence needed for continued improvement while the edge will execute trusted actions immediately.

Hybrid Cloud Robotics Architecture Layers

Example: 5G-enabled robots can send data to the cloud 10-100x faster than traditional wireless networks.

Source: Ericsson 5G for Robotics Report

https://www.ericsson.com/en/reports-and-papers/industrylab/reports/5g-for-robotics

From Reflex to Reason: How Modern Robotics Thinks

The rapid response of the self-driving car can seem magical, but as you can now see, it is really a partnership. We know the same idea from our own bodies, which respond to the environment in two ways. Our nervous system gives us quick reflexes when we face an imminent threat, while our cognitive abilities let us think strategically and plan ahead. Hybrid cloud robotics works the same way: it is built on collaboration between fast local reflexes and deliberate remote reasoning.

Your next assignment is not to build a robot, but to start seeing every robot through this lens. When you see a delivery drone or a smart vacuum cleaner, or read about an automated checkout, try to identify the invisible “dance” taking place. Ask yourself: how much of the process was a quick reaction, and how much was deliberate thought? This will help you spot the advantages of edge computing in action and make this knowledge your own.

You can now look at the ever-changing technology landscape beyond the machine itself and understand the unseen processes that power its intelligence. In short, you no longer just see the technology; you understand how it works.

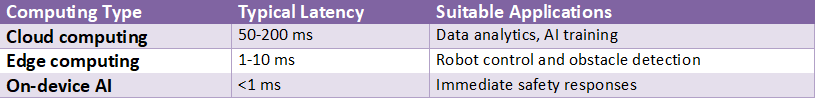

The Problem of Delay: Why Robots Can’t Just Use the Internet for Everything

Splitting a robot’s brain between the cloud and a local controller is necessary because of the distance between the cloud and the robot. Although signals travel at close to the speed of light, sending a command to a server in another country, or even across a large city, means waiting for a response. That delay is called latency, and it matters greatly for self-driving vehicles: if a vehicle detects a bicycle swerving into its path, a few milliseconds may be the difference between avoiding the bicycle and hitting it.
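A quick back-of-the-envelope calculation shows why milliseconds matter. The sketch assumes signals in optical fiber travel at roughly 200,000 km/s (about two-thirds of the speed of light in a vacuum) and uses an illustrative 100 ms total round trip, a made-up but plausible figure once routing, queuing, and server processing are added to pure propagation.

```python
FIBER_KM_PER_S = 200_000  # approximate signal speed in optical fiber


def propagation_rtt_ms(one_way_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * one_way_km / FIBER_KM_PER_S * 1000


def distance_during_delay_m(speed_kmh: float, delay_ms: float) -> float:
    """Meters a vehicle travels before a delayed response can take effect."""
    return speed_kmh / 3.6 * delay_ms / 1000


print(f"{propagation_rtt_ms(1000):.1f} ms")          # 10.0 ms for a server 1,000 km away
print(f"{distance_during_delay_m(100, 100):.2f} m")  # 2.78 m at 100 km/h with a 100 ms RTT
```

Propagation is only the floor: even that 10 ms minimum means a car at 100 km/h moves almost 0.3 m before any reply can arrive.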

Latency is the time between sending a request to a remote server and receiving its response. It is what causes lag in video calls. A frozen video call is merely annoying, but in a robot, latency can cause a complete loss of function. For example, a delivery drone that relies on the cloud to decide how to avoid pedestrians on a busy street may receive its answer too late and collide with someone instead of steering around them.

It follows that the cloud can never be a robot’s only source of intelligence. Cloud-based solutions excel at large-scale machine learning and data analytics, but their latency is too high for split-second control in the real world. A new architecture was therefore developed that adds a high-speed “nervous system” (reflexes) so the robot can operate effectively in real-world environments.

The Solution for Speed: Giving Robots ‘Reflexes’ with Edge Computing

Engineers found a solution to this delay problem. They recognized that a robot cannot wait for permission from a remote server, so they used edge computing to let the robot make decisions on its own, instantly.

Edge computing works much like the reflexes in your own body. When you touch a hot stove, you pull your hand away before you are even aware of the burning sensation. In the same way, edge computing gives the robot a small but capable mini-brain that makes instantaneous decisions because it sits at the “edge” of the network, on the robot itself.

You probably already know this technology. Smart vacuums don’t send video to the Internet to decide whether to turn around a chair leg; their onboard sensors detect the obstacle, and they turn in less than a second using their own information. Because it relies on local information, the robot reacts much faster and makes better-timed decisions. The result is that what would otherwise be a slow, clumsy robot becomes a fast, agile assistant that navigates a cluttered environment with little input from you.

A mini-computer like this is well suited to fast decisions, but not to large-scale storage or heavy computation. For those, the robot still relies on the “brain” in the cloud.

Robotics Edge Computing: Low-Latency Computing for Intelligent Robots

Robotics edge computing means placing critical robot workloads as close to the robot as possible: on the robot itself, on premises, or on a local edge server. It serves two main purposes: reducing the latency of processing sensor data and increasing the reliability of robot operation. With robotics edge computing, a robot can respond to people, objects, and other stimuli within milliseconds, which lets it navigate around objects and interact with them more accurately.

One of the most important advantages of Robotics Edge Computing is real-time perception and control. A constant stream of data from cameras, lidar, force sensors, and encoders must be analyzed immediately, so Robotics Edge Computing performs sensor fusion, object detection, localization, path planning, and the control loop locally, without waiting on round trips to remote servers.
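Sensor fusion is easiest to see in a small example. The sketch below uses a complementary filter, a simple alternative to the Kalman filters many robot stacks use, to fuse a gyroscope (fast and smooth, but drifts over time) with an accelerometer-derived tilt angle (noisy, but drift-free). The blending factor `alpha` and the 100 Hz loop rate are hypothetical tuning choices.

```python
class ComplementaryFilter:
    """Fuse gyro rate and accelerometer angle into one tilt estimate (radians)."""

    def __init__(self, alpha: float = 0.98, initial_angle: float = 0.0):
        self.alpha = alpha          # weight on the integrated gyro estimate
        self.angle = initial_angle

    def update(self, gyro_rate: float, accel_angle: float, dt: float) -> float:
        # Integrate the gyro for short-term accuracy, then pull gently toward
        # the accelerometer reading to cancel long-term drift.
        predicted = self.angle + gyro_rate * dt
        self.angle = self.alpha * predicted + (1 - self.alpha) * accel_angle
        return self.angle


DT = 0.01  # 100 Hz control loop (hypothetical)
fusion = ComplementaryFilter()
# Robot held at a constant 0.1 rad tilt: gyro reads 0 rad/s, accelerometer reads 0.1 rad.
for _ in range(500):
    estimate = fusion.update(gyro_rate=0.0, accel_angle=0.1, dt=DT)
print(round(estimate, 4))
```

Even starting from a wrong initial angle of zero, the estimate converges to the true tilt within a few seconds of loop time.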

Lower latency in the local pipeline improves robot safety and performance (for example, faster stopping and avoidance), increases efficiency, and reduces operating costs.

Robotics Edge Computing also reduces network bandwidth consumption and operating costs by sending the cloud only detected events, anomalies, summaries, and short data clips rather than full-motion video or full point clouds. This makes fleets easier to scale and, by keeping sensitive data local, helps with privacy compliance.

Additionally, Robotics Edge Computing enables “offline-first” operation for mission-critical robotics applications: robots keep performing their tasks whether or not they are connected to the cloud, and they sync their logs and metrics once the connection returns.
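Offline-first syncing is often built as a store-and-forward queue. In this minimal sketch, log and metric records accumulate locally and are flushed in order once a connection is available. The `send` callback and the queue size are hypothetical, and a real robot would persist the queue to disk so it survives a reboot.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Buffer records locally while offline; upload them in order when connected."""

    def __init__(self, send: Callable[[dict], bool], max_items: int = 10_000):
        self.send = send                      # returns True if the upload succeeded
        self.queue = deque(maxlen=max_items)  # oldest records are dropped when full

    def log(self, record: dict) -> None:
        self.queue.append(record)

    def flush(self, connected: bool) -> int:
        """Try to upload queued records; stop at the first failure. Returns count sent."""
        if not connected:
            return 0
        sent = 0
        while self.queue:
            if not self.send(self.queue[0]):
                break  # keep the record and retry on the next flush
            self.queue.popleft()
            sent += 1
        return sent


uploaded = []


def fake_upload(record: dict) -> bool:
    uploaded.append(record)  # stand-in for a real network call
    return True


buffer = StoreAndForward(fake_upload)
for i in range(3):
    buffer.log({"seq": i, "battery_pct": 90 - i})
print(buffer.flush(connected=False))  # 0: offline, nothing sent
print(buffer.flush(connected=True))   # 3: all records uploaded in order
```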

In many robot deployments, Robotics Edge Computing runs alongside Hybrid Cloud Edge Robotics. Time-sensitive functions run on edge resources, while larger workloads, such as model training, simulation, analytics, and fleet-wide software updates, run in the cloud. Teams using Hybrid Cloud Edge Robotics can continuously push new model versions to many robots at once. Hybrid Cloud Edge Robotics also gives teams a single point of governance (identity, access control, signed artifacts, audit trails) while leaving each robot autonomous at the edge.

When teams combine Hybrid Cloud Edge Robotics with Robotics Edge Computing, they can strike a strong balance between quick, localized decision-making and scalable, cloud-based intelligence. The result is a robot that responds locally in real time, keeps learning and adapting, and remains easy to manage as more robots are deployed: just what today’s autonomous robotic systems need.

Edge Computing Robotics: Real-Time Intelligence Where Robots Operate

Edge computing for robotics lets robots process information locally, whether on the robot itself, at a nearby gateway, or on an on-site edge computer, so they can make rapid decisions and respond quickly. With Edge Computing Robotics, perception, planning, and safety behaviors do not have to wait for data to reach a remote data center. This matters, for example, when a robot must detect a person approaching its path and slow down, reduce the force it applies to a fragile product, or move quickly through a narrow aisle.

Beyond improving timing and responsiveness, Edge Computing Robotics allows workloads such as sensor fusion, object recognition, SLAM/localization, collision avoidance, and control-loop execution to run locally on the robot.

These workloads are both time-sensitive (i.e., real-time) and data-heavy (e.g., video and LiDAR), so running them locally on the robot reduces jitter and produces smoother, more predictable motion. Edge Computing Robotics also improves uptime: even when Wi-Fi drops out or bandwidth is limited, the robot can keep working autonomously using local processing.

Edge Computing Robotics also enables intelligent real-time data processing. The edge filters, reduces, and compresses raw sensor data before sending it to the cloud for further processing, which lowers both the volume of data transmitted and cloud costs, and processing images at the edge supports compliance with privacy regulations. The edge can also continuously monitor for model drift, identify anomalies (e.g., lighting changes or floor glare), and flag data for retraining.
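One simple heuristic for the drift monitoring described above is to watch the model's average confidence over a sliding window and flag data for retraining when it falls well below a baseline measured at deployment time. The baseline, window size, and tolerance below are hypothetical, and confidence is only a proxy; production systems often also compare input statistics or sample labeled spot checks.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flag possible drift when rolling mean confidence drops below baseline - tolerance."""

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.1):
        self.baseline = baseline
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)

    def observe(self, confidence: float) -> bool:
        """Record one prediction's confidence; return True if drift is suspected."""
        self.recent.append(confidence)
        window_full = len(self.recent) == self.recent.maxlen
        return window_full and mean(self.recent) < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.9, window=5)
normal = [monitor.observe(0.9) for _ in range(5)]  # stable lighting
glare = [monitor.observe(0.6) for _ in range(5)]   # e.g., new floor glare
print(normal[-1], glare[-1])  # False True
```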

Many teams use Hybrid Cloud Edge Robotics to combine the strengths of each platform: rapid execution at the edge and scalable intelligence in the cloud. The cloud contributes heavy training, simulation, fleet analytics, and centralized management, while the edge enables instant decision-making. Hybrid Cloud Edge Robotics also makes model rollouts controllable: test an update on a few robots first, assess its performance, and quickly revert to the previous model version if problems arise.

Across a large fleet, Edge Computing Robotics also creates a coordination advantage. A facility’s local edge nodes can coordinate traffic flow, share map updates, and distribute work among the robots on site. Hybrid Cloud Edge Robotics adds a unified mechanism for logging, policy enforcement, and security across all locations. Implemented properly, the combination of Hybrid Cloud Edge Robotics and Edge Computing Robotics lets robots keep learning and improving while delivering real-time intelligence at the point of operation.

Edge AI Robotics: AI Decisions in Real Time

Edge AI Robotics means running AI models directly on the robot, next to its sensors and actuators, so it can make decisions in milliseconds rather than seconds. The robot can “see” people, objects, and distances in its sensor input and react accordingly, producing smooth motion, safe navigation, and effective collaboration with humans. Because inference happens locally, Edge AI Robotics also greatly reduces the need to send raw video or LiDAR data to the cloud, cutting bandwidth costs and keeping sensitive information on site.

Real-world uses of Edge AI Robotics include perception (vision and depth), localization and mapping, anomaly detection, and on-device quality checks. Because the model runs on the local device, an Edge AI Robotics application keeps working when Wi-Fi is unreliable or when the location has little or no Internet connectivity. This is a major reason factories, warehouses, and hospitals are adopting Edge AI Robotics for day-to-day automation.

Hybrid Cloud Edge Robotics remains relevant as robotics evolves. In a Hybrid Cloud Edge Robotics deployment, the edge executes all latency-critical inference and control, while the cloud trains larger models, runs simulations, manages the fleet, and analyzes performance across multiple robots and sites. This lets you roll out software updates efficiently, monitor model drift, and compare results across environments without disrupting real-time operation.

In this model, Edge AI Robotics processes data locally at the point of collection and sends the cloud only a filtered, summarized signal (events, embeddings, failures, or short video clips). The Hybrid Cloud Edge Robotics environment can then use this data to retrain models, assess performance, and deliver signed model packages back to the edge devices for deployment.

With a well-planned rollout strategy, Hybrid Cloud Edge Robotics first evaluates changes on a small number of robots, monitors safety-related metrics, and immediately reverts to the previous model version if it detects a regression.
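The staged rollout just described can be sketched as a loop over growing fractions of the fleet. In this hypothetical version, `deploy` and `safety_metric` are callbacks you would supply (here a lower metric is better, such as near-miss events per hour), and the stage sizes and 10% regression threshold are illustrative. A real rollout would observe each stage for hours or days before judging it, rather than reading the metric immediately.

```python
from typing import Callable, List, Sequence


def staged_rollout(robot_ids: List[str], new_version: str, old_version: str,
                   deploy: Callable[[str, str], None],
                   safety_metric: Callable[[List[str]], float],
                   stages: Sequence[float] = (0.05, 0.25, 1.0),
                   max_increase: float = 0.10) -> bool:
    """Deploy in stages; roll every updated robot back if the safety metric regresses."""
    baseline = safety_metric(robot_ids)  # measured on the current model; lower is better
    updated: List[str] = []
    for fraction in stages:
        target = robot_ids[:max(1, round(fraction * len(robot_ids)))]
        for rid in target[len(updated):]:
            deploy(rid, new_version)
            updated.append(rid)
        if safety_metric(updated) > baseline * (1 + max_increase):
            for rid in updated:
                deploy(rid, old_version)  # immediate rollback
            return False
    return True


# Simulated fleet: a "bad" model doubles the near-miss rate.
fleet = [f"robot-{i}" for i in range(20)]
versions = {rid: "v1" for rid in fleet}


def deploy(rid: str, version: str) -> None:
    versions[rid] = version


def near_miss_rate(ids: List[str]) -> float:
    return sum(2.0 if versions[r] == "v2-bad" else 1.0 for r in ids) / len(ids)


print(staged_rollout(fleet, "v2-bad", "v1", deploy, near_miss_rate))  # False
print(set(versions.values()))  # {'v1'}: canary rolled back
print(staged_rollout(fleet, "v2", "v1", deploy, near_miss_rate))      # True
```

The bad update is caught on the single canary robot in the first stage, so the rest of the fleet never sees it.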

Security and safety are critical in both approaches. Edge AI Robotics makes it easier to enforce policies on each unit and recover quickly from failures, while Hybrid Cloud Edge Robotics offers centralized identity management, audit logs, and controlled access to models and telemetry. Combined, Edge AI Robotics and Hybrid Cloud Edge Robotics form a robust platform that supports local autonomy, continuous learning in the cloud, and reliable operation of many robots. This is how the current generation of robotics turns AI into trusted, timely action.

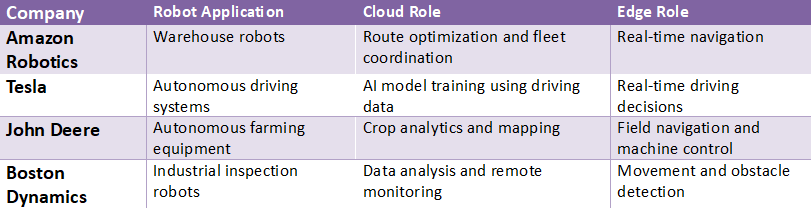

Real-World Hybrid Cloud-Edge Robotics Deployments

Source: McKinsey Robotics and Automation Insights

https://www.mckinsey.com/industries/advanced-electronics/our-insights/the-future-of-robotics

The ‘Big Brain’ in the Sky: What the Cloud Is Really For

Most people think of the cloud as a place to store photos and documents. For a robot, however, the cloud is a powerful means of learning, much like a brain. When you touch a hot stove, you pull your hand away by reflex (the edge); afterwards, your brain (the cloud) processes what happened and forms a memory so you can learn from it.

Similarly, the cloud handles most of a robot’s deliberate decision-making and long-term memory, and this is where its real power lies. The cloud does not just analyze data from individual robots; it can combine data from an entire fleet to build collective knowledge. If one delivery drone struggles to navigate around a newly constructed area, the system collects and analyzes that data and pushes a software update to the other cloud-connected robots so each one knows the best course of action. In this way, when one robot learns something, all robots learn from that experience.

A robot’s edge computer makes the quick “avoid hitting that wall” decisions, while the cloud makes the “how do we make all of our robots smarter tomorrow?” decisions. With its limited processing power, a robot’s local brain cannot perform the same comprehensive analysis as the cloud, which provides the substantial computing resources the robot needs to learn over time. A robot therefore does not have to choose between relying on its own reflexes or on a remote brain; it is designed to use both in a symbiotic relationship.

Hybrid Cloud Solutions: Flexible Cloud Power, Smarter Control

Hybrid Cloud Solutions combine the best features of on-premises infrastructure and the public cloud. They let applications scale elastically as demand grows, provide control where it is needed for security and regulatory compliance, and offer a much easier migration path for modernizing legacy applications.

Hybrid Cloud Solutions give organizations the flexibility to place each application in the environment that suits it best: sensitive and latency-sensitive workloads run closer to users and operations, while the cloud provides burst capacity, advanced analytics, and rapid prototyping. This also protects the organization from “all or nothing” migrations that can disrupt the business.

Operationally, a major benefit of Hybrid Cloud Solutions is improved control. With centralized policy management, an organization can manage identity, access, encryption, and compliance across all environments while still keeping local systems for performance and continuity.

Hybrid Cloud Solutions also enable resilient architectures: when connectivity is limited, critical services run locally and then sync data and logs to the cloud once connectivity returns. Many organizations find that this hybrid model fits their budgets and works better operationally than a purely cloud or purely on-premises model.

Hybrid Cloud Edge Robotics shows why hybrid models matter so much in robotics and automation. Perception, safety stops, and motion control must respond immediately, so they all have to run at the edge. At the same time, Hybrid Cloud Edge Robotics relies on cloud-scale processing to train models, run simulations, and analyze robot fleets, continually improving its models.

Hybrid Cloud Solutions enable real-time inference to run close to the robot while using the cloud to retrain vision models, leverage a digital twin, and coordinate updates across multiple sites. This ability to maintain low latency while continuously learning is what makes Hybrid Cloud Edge Robotics possible.

Beyond low latency, Hybrid Cloud Solutions make rollouts safer. Hybrid Cloud Edge Robotics supports staging updates, testing them on a subset of devices, monitoring for regressions, and rolling back quickly. Data can be filtered at the edge to preserve privacy and limit bandwidth, while the cloud aggregates insights to improve overall system performance, another advantage of Hybrid Cloud Edge Robotics.

Cloud-based solutions bring scalability, flexibility, and centralized intelligent control. Hybrid Cloud Solutions extend these benefits to systems that need both fast response times and large-scale intelligence, such as Hybrid Cloud Edge Robotics.

Cloud Edge Solutions combine the performance of distributed processing with the cost savings of centralized cloud services. In this architecture, low-latency, real-time processing happens at the “edge” of the network (robots, cameras, sensors, and machines), while compute- and data-intensive workloads (analytics, model training, and large-scale storage) are hosted in the cloud. This reduces latency and improves reliability, and critical functions keep running even when the cloud connection is poor.

A key advantage of Cloud Edge Solutions is real-time decision-making. Instead of sending all raw video, telemetry, and LiDAR streams to a distant location and waiting for results, the edge node can run inference, filter data, and trigger actions in response to local events and conditions. Cloud Edge Solutions also lower bandwidth costs because only what is required (events, anomalies, summary data) or compressed versions of the original data are transmitted.

Companies that need data confidentiality and control can use Cloud Edge Solutions to keep their data local while still getting enterprise-level governance, dashboards, and fleet-wide insights from centralized cloud services.

Hybrid Cloud Edge Robotics is an excellent case study of hybrid cloud-edge computing applied to robotics. Edge execution provides the millisecond-level latency that safety stops, navigation, and motion control require, but Hybrid Cloud Edge Robotics has an equally important need for cloud-scale learning: training perception models, running simulations, and comparing fleet-wide behavior across sites.

Cloud Edge Solutions provide the “glue” that makes it easier to deploy consistent services to all remote sites and update them remotely without affecting real-time operations. Hybrid Cloud Edge Robotics therefore typically uses edge nodes as caches and coordinators, and the cloud for orchestration and continuous improvement.

Operationally, Cloud Edge Solutions allow safer, more fault-tolerant deployments. A Hybrid Cloud Edge Robotics environment supports staged model upgrades, monitoring of key performance indicators (KPIs), and rapid rollback if KPIs degrade. If communication with the cloud fails, the edge can continue to execute critical functions until synchronization resumes, a highly attractive property in busy Hybrid Cloud Edge Robotics environments.

Implementing Cloud Edge Solutions effectively requires standardized observability across edge and cloud, secure device identities and artifact signing, and clear policies on where each function runs. Done correctly, Cloud Edge Solutions deliver cloud-scale capabilities with edge-level latency, which is exactly what Hybrid Cloud Edge Robotics needs to operate responsively and securely while continuously improving.

Cloud vs Edge Response Time Comparison

Example: Industrial robots in smart factories require a latency below 10 milliseconds for safe automation.

Source: Intel Edge Computing Research

https://www.intel.com/content/www/us/en/edge-computing/what-is-edge-computing.html

The Dream Team: How Hybrid Cloud and Edge Work Together

Fast local thinking on the robot (the edge) supports quick movements, while powerful remote thinking (the cloud) enables deeper reasoning. Hybrid cloud-edge robotics combines the “fastest” (edge) and “smartest” (cloud) thinking systems, so the robot can be both faster and smarter.

The simplest way to understand how the edge and the cloud relate is to use your own body as a reference point. Your reflexes are your edge computing: when you trip over a curb, your arms swing out to catch you before you even realize what happened. That is your local nervous system responding quickly to keep you from getting hurt. Once you are steady, your brain (your cloud) has time to analyze what happened and form a memory so you don’t trip over that curb again.

This close connection creates a continuous learning loop. The edge computer on the robot is constantly making thousands of quick decisions. When something unusual happens, such as a new obstacle in the robot’s path, the robot flags the event and sends the data to the cloud. The cloud brain analyzes it, determines the most effective way to handle the new obstacle, and sends that decision back to the robot. Through this hybrid design, each robot keeps getting smarter over time.
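The loop described above can be sketched in a few lines of Python. Everything here is hypothetical: `EdgeBrain`, the `min_confidence` threshold of 0.8, the `slow_and_stop` fallback action, and `cloud_analyze`, which stands in for real cloud-side training. The sketch shows the division of labor. The edge always answers immediately, and only unusual or uncertain events are queued for the cloud.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Detection:
    label: str
    confidence: float  # 0..1 score from the onboard perception model


@dataclass
class EdgeBrain:
    """Hypothetical edge controller: acts locally, escalates unusual events."""
    known_actions: Dict[str, str]
    min_confidence: float = 0.8
    upload_queue: deque = field(default_factory=deque)

    def react(self, det: Detection) -> str:
        action = self.known_actions.get(det.label)
        if action is None or det.confidence < self.min_confidence:
            # Unfamiliar or uncertain: take a conservative action right now
            # and queue the event for the cloud to analyze later.
            self.upload_queue.append(det)
            return "slow_and_stop"
        return action

    def apply_cloud_update(self, new_actions: Dict[str, str]) -> None:
        """Merge behaviors the cloud learned (possibly from the whole fleet)."""
        self.known_actions.update(new_actions)


def cloud_analyze(events: List[Detection]) -> Dict[str, str]:
    """Stand-in for cloud-side learning: assign a detour to each new label."""
    return {e.label: "plan_detour" for e in events}


# Usage: an unknown obstacle is escalated, learned in the cloud, then handled locally.
edge = EdgeBrain(known_actions={"pallet": "plan_detour", "person": "stop"})
print(edge.react(Detection("traffic_cone", 0.95)))  # slow_and_stop
update = cloud_analyze(list(edge.upload_queue))
edge.upload_queue.clear()
edge.apply_cloud_update(update)
print(edge.react(Detection("traffic_cone", 0.95)))  # plan_detour
```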

In the end, the hybrid design makes robots more than pre-programmed machines. They respond quickly to their environment as part of a dynamic system and become more capable over time. Each robot learns from its own experience, and that knowledge can be shared to make the entire fleet safer and more efficient.

Decision Location in Hybrid Cloud-Edge Robotics

Example: Autonomous delivery robots process obstacle detection locally, but route optimization happens in the cloud.

Source: IBM Edge Computing for Robotics

https://www.ibm.com/topics/edge-computing
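A placement policy like the one in this example can be written as a small function. This sketch assumes illustrative throughput figures (`EDGE_GFLOPS`, `CLOUD_GFLOPS`), an assumed cellular round trip (`CLOUD_RTT_MS`), and invented task costs and deadlines. It picks the cloud only when the cloud is both faster and able to meet the task’s deadline. Otherwise it keeps the work on the robot.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    deadline_ms: float    # how quickly a result is needed
    compute_gflop: float  # rough compute cost of the task


# Assumed capabilities (illustrative only).
EDGE_GFLOPS = 50.0     # onboard accelerator throughput
CLOUD_GFLOPS = 5000.0  # cloud accelerator throughput
CLOUD_RTT_MS = 60.0    # round trip over a cellular link


def estimate_ms(task: Task, gflops: float, rtt_ms: float) -> float:
    """Estimated completion time = network round trip + compute time."""
    return rtt_ms + task.compute_gflop / gflops * 1000.0


def place(task: Task) -> str:
    """Send work to the cloud only if it is faster there AND meets the deadline."""
    edge_ms = estimate_ms(task, EDGE_GFLOPS, 0.0)
    cloud_ms = estimate_ms(task, CLOUD_GFLOPS, CLOUD_RTT_MS)
    if cloud_ms <= task.deadline_ms and cloud_ms < edge_ms:
        return "cloud"
    return "edge"


tasks = [
    Task("obstacle_detection", deadline_ms=50.0, compute_gflop=0.5),
    Task("route_optimization", deadline_ms=5000.0, compute_gflop=2000.0),
]
for t in tasks:
    print(f"{t.name}: {place(t)}")
# obstacle_detection: edge
# route_optimization: cloud
```

Defaulting to the edge is a deliberate choice in this sketch: if the cloud cannot meet a deadline, the robot should not wait on the network.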

Three Places You’ll See Hybrid Robotics in Action Today

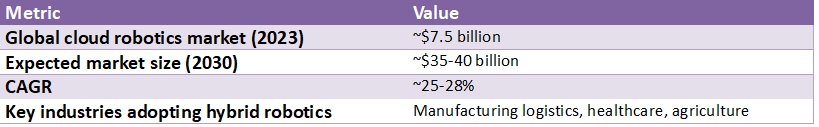

The edge-cloud partnership lets the edge handle real-time processing while the cloud contributes its vast resources. Together they enable new consumer experiences such as cashierless stores, autonomous delivery drones, and automated farming.

Several organizations already use this partnership in services that reach the public:

- Stores like Amazon Go use a combination of cameras and sensors to track customers as they shop. When a customer selects an item, the cameras and sensors in the store (at the edge) send a fast stream of data to the cloud for processing. The cloud can then charge the customer’s account, update inventory levels for the selected items, and analyze shopping patterns across all of the store’s customers.

- Robots are also being used to improve crop yields by identifying weeds with their onboard cameras. Each robot runs machine learning algorithms on the device itself (at the edge) to quickly decide whether a plant is a weed, and sprays it if so. After each pass through the field, the robot sends data to the cloud, such as the number of weeds sprayed per acre, and the cloud analyzes it to plan a strategy for the next day that maximizes crop yield.

- Delivery drones are another example of edge-cloud partnerships creating new user experiences. Because a delivery drone’s primary job is to deliver packages safely, its edge processor focuses on quick responses to hazards such as birds and shifting winds. The cloud manages routes for the entire drone fleet to ensure timely delivery and minimize air congestion.
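The weeding example follows a common pattern: act locally, then upload a compact summary. The sketch below assumes the robot logs which hypothetical field section (such as `"A"` or `"B"`) each spray occurred in and knows each section’s area in acres. The cloud-side `plan_next_day` is a deliberately simple ranking, not an actual agronomic strategy.

```python
from collections import Counter
from typing import Dict, List, Tuple


def summarize_pass(spray_sections: List[str], section_acres: Dict[str, float]) -> Dict[str, float]:
    """Edge side: turn raw spray events into weeds-per-acre for each section.

    Only this small summary is uploaded, not every camera frame.
    """
    counts = Counter(spray_sections)
    return {sec: counts.get(sec, 0) / acres for sec, acres in section_acres.items()}


def plan_next_day(summary: Dict[str, float], top_n: int = 2) -> List[Tuple[str, float]]:
    """Cloud side (simplified): revisit the sections with the most weeds per acre first."""
    return sorted(summary.items(), key=lambda kv: kv[1], reverse=True)[:top_n]


# Usage with made-up spray events and section sizes.
sprays = ["A", "A", "B", "A", "C", "C"]
acres = {"A": 1.5, "B": 2.0, "C": 0.5, "D": 1.0}
summary = summarize_pass(sprays, acres)
print(plan_next_day(summary))  # [('C', 4.0), ('A', 2.0)]
```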

Ultimately, assigning immediate, simple tasks to the edge (local computing) and complex ones to the cloud allows both the edge and the cloud to operate securely and efficiently.

Global Edge Robotics and Cloud Robotics Market Statistics

Source: MarketsandMarkets Cloud Robotics Report

https://www.marketsandmarkets.com/Market-Reports/cloud-robotics-market-944.html

What Happens When the Connection Gets Faster? The Role of 5G

The edge handles a robot’s immediate reactions, but it still needs to communicate with the cloud so the robot can learn, receive updates, and coordinate with other robots. A slow link between the two is like your Internet on a bad day, when you’re in a video conference and miss half the conversation because of a choppy video feed. When a team of robots works together, communication delay (latency) slows every robot down, reducing the amount of work the group can accomplish as a whole.
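A simplified model shows why latency compounds for a team. It assumes each task requires a fixed number of coordination round trips (`sync_rounds`) that the robot must wait out. The work time, round count, fleet size, and both round-trip times are illustrative assumptions.

```python
def tasks_per_hour(work_s: float, sync_rounds: int, rtt_ms: float, robots: int) -> float:
    """Team throughput when every task needs `sync_rounds` coordination round trips.

    Simplified model: each robot works sequentially and waits out each round trip.
    """
    per_task_s = work_s + sync_rounds * rtt_ms / 1000.0
    return robots * 3600.0 / per_task_s


for rtt in (20.0, 200.0):
    rate = tasks_per_hour(work_s=2.0, sync_rounds=20, rtt_ms=rtt, robots=10)
    print(f"RTT {rtt:.0f} ms: {rate:.0f} tasks/hour")
# RTT 20 ms: 15000 tasks/hour
# RTT 200 ms: 6000 tasks/hour
```

In this model a tenfold increase in round-trip time cuts the team’s output by more than half, even though each robot’s own work is unchanged.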

5G could improve this situation by providing a better “highway” between the edge and the cloud. It would let the robot communicate rapidly with the cloud while retaining both its fast local reflexes and its long-term memory. Besides being fast, a 5G connection is designed to be reliable. As a result, the robot could quickly transmit large amounts of data (such as HD video) and receive complex instructions back from the cloud.
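A back-of-the-envelope model shows how both bandwidth and latency affect sending HD video to the cloud. The bitrate, uplink speeds, and round-trip times below are assumptions chosen for illustration, not measured or specified 5G figures. The model also ignores protocol overhead and congestion.

```python
def upload_time_s(clip_seconds: float, bitrate_mbps: float, uplink_mbps: float, rtt_ms: float) -> float:
    """Time to ship a recorded clip to the cloud: one round trip plus transfer time."""
    size_megabits = clip_seconds * bitrate_mbps
    return rtt_ms / 1000.0 + size_megabits / uplink_mbps


# A 10-second HD clip at an assumed 8 Mbps bitrate.
slow = upload_time_s(clip_seconds=10, bitrate_mbps=8, uplink_mbps=10, rtt_ms=60)
fast = upload_time_s(clip_seconds=10, bitrate_mbps=8, uplink_mbps=100, rtt_ms=10)
print(f"slower link: {slow:.2f} s")  # slower link: 8.06 s
print(f"faster link: {fast:.2f} s")  # faster link: 0.81 s
```

For large payloads like video, bandwidth dominates; for small coordination messages, the round-trip time does.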

5G would also greatly reduce the delay between the edge and the cloud. What could such a fast connection make possible?

For example, consider a group of search-and-rescue robots searching a collapsed building after an earthquake. With 5G, the robots could stream their live video feeds to a single central “brain” in the cloud. The cloud could then combine the individual feeds into one live 3-D view of the entire disaster area, identify people who may still be alive, and direct the robots as a unified team. This kind of coordinated effort depends on high-speed connectivity and would be difficult or impossible over a slower network.