The likelihood is you’ve already used AI somewhere today; perhaps, at the very least, you saw a recommendation from Netflix on something to watch, AI removed spam from your email, or AI was involved when you called the business for support via their ChatBot. Today, AI is no longer just Science Fiction, but a regular part of our daily lives, guiding us and influencing the products we see and buy, the things we read, and the options available to us.

Because of the rapid pace of AI advancements, the Federal Government has established its first-ever formal Rulebook governing AI use. In addition to creating rules governing employment (i.e., hiring) and lending (i.e., loan approvals) as AI becomes increasingly adept at making decisions in these areas, the Federal Government is quickly developing Guardrails to help ensure that the benefits of AI are realized while protecting citizens from potential risks.

It can be difficult to keep up with developments in new AI-related policies and the recent news on the same. You might have heard of an “AI Bill of Rights” and possibly wondered if it affects you or your family. This Guide provides straightforward and direct answers to any questions you have regarding the White House Plan to Protect you, explains how it will happen, and discusses what it will mean to your Privacy and protections afforded under the Basic Laws governing the Protection of Your Rights.

#Complete Guide: Success & Powerful Best AI Tools for Business in USA

Summary

“Breaking Progressive AI Regulation News in the United States,” discusses the United States’ development of an overall regulatory environment for artificial intelligence (AI), via a combination of executive action/policy at the White House level, legislative/executive agency action, and state law. It outlines the main components of the White House’s Artificial Intelligence Executive Order, which defines Safety, Individual Rights Protections, and Continued Leadership in AI Innovation as the primary objectives.

The United States chose to implement a regulatory strategy focused on high-risk AI applications rather than applying similar regulations across all types of AI. High-risk AI applications include those used in critical infrastructure and/or health care, where higher standards of safety testing and security have been established before they are made available to the general public. In addition to implementing measures to regulate AI and prevent harms associated with deepfakes, the U.S. government is working toward establishing requirements that AI-generated content be labeled or watermarked to inform consumers about what is real and what is not online.

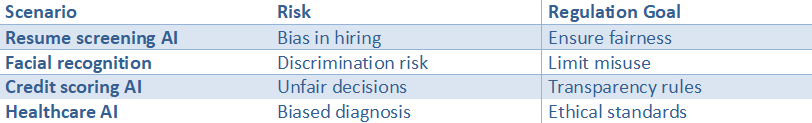

A second priority in the U.S. government’s AI focus is civil rights. The government has expressed its commitment to ensuring that anti-discrimination laws will apply regardless of whether an algorithm or a human makes the decision. Therefore, if a decision is made by an algorithm, there is still an obligation under the law to eliminate discrimination. As such, the government is encouraging the elimination of bias in AI decision-making processes (e.g., hiring, lending, insurance) and advocating for “explainable” AI. Explainable AI would allow for greater understanding and transparency into why certain decisions were made in automated decision-making processes.

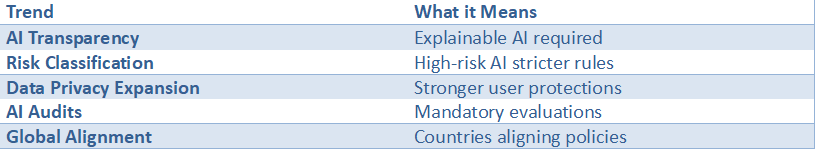

Unlike the EU’s AI Act, which regulates AI based on a single-tiered, risk-based model, the United States’ regulatory approach to AI is being implemented by building on existing regulatory and oversight mechanisms employed by various federal agencies. Some of the major trends to monitor moving forward will be increased use of AI labels, increased transparency, and possibly future federal legislation related to AI.

AI Regulation News in the United States: Stay updated on the latest developments shaping AI regulation and policy across the United States

The speed with which is changing in AI Regulation News in the United States is significant. Therefore, it is essential that all parties who develop, purchase, or utilize AI remain current regarding these changes. Policymakers (at both the Federal and State levels) are currently seeking ways to support innovation while preventing the negative consequences of AI system development. These potential negative consequences include unfairness in the use of hiring tools, “bias,” interfering with elections via the use of “deep fakes,” and unclear methods of making decisions by lenders, landlords, and health care providers through automated means.

Another major theme in AI Regulation news in the United States is the expanding roles of well-established regulatory organizations. Instead of waiting for Congress to pass an overarching “AI Law”, many regulatory agencies will apply existing law and regulation to how AI systems are being used — particularly if there is concern regarding protecting consumers, protecting Civil Rights, and/or protecting Personal Data from unauthorized access.

Therefore, companies can expect to face enforcement action against them based on how their AI systems work (in practice), as opposed to simply how their AI systems were marketed. Therefore, establishing robust documentation, testing, and governance processes will become much more critical to ensure that the AI models are accurate.

At the same time, AI regulation news in the United States reflects a growing shift toward state-level action. With respect to many of these topics — transparency, biometric privacy, use of automated decision-making, and disclosure about media created using AI systems — the states are moving forward and adopting laws. This varied approach by state presents some possible obstacles for companies with a presence in more than one state, who need to develop compliant strategies to navigate among the various conduct standards, or that otherwise limit their ability to introduce innovative products to market.

There is another emerging trend in AI regulation news in the United States towards greater clarity on which entities will be held responsible for the risks posed by the use of AI. Regulators and policymakers are requiring risk assessments, human oversight and review (i.e., audit), and post-incident reporting, particularly from those organizations applying AI tools in “high-risk” areas (e.g., applicant screenings; student evaluations; medical assistance; operation of critical infrastructure; government).

Although consistency in regulations has not been achieved, clarity regarding expectations for organizations exists: know your data sources; determine whether the AI system you use could create disproportionate impacts based on protected class; protect your sensitive data appropriately; and prepare to explain the outputs provided by the AI system.

There has been an increase in AI regulation news in the United States, covering supply chain issues. As such, organizations are being asked to understand how the tools they use operate, as well as their vendor(s), the data set(s) used to develop their tool, and the third-party model(s) used to develop their tool. These areas (terms of contract, evaluation right(s), and security control) are rapidly becoming standard requirements within procurement for all tools and software.

To be aware of AI Regulation News in the United States, it is important to track legislative activity, agency regulations/guidelines, and enforcement activities. Then you will need to take what has occurred and translate that into workable procedures: 1. Identify your AI usage scenarios; 2. Categorize the risk associated with your use of AI; 3. Document the decisions made during the selection process for your AI solution; 4. Establish recurring check-in/evaluation time frames to assess the effectiveness of your selected AI solution(s).

Overall, AI Regulation News in the United States indicates a clear trend for the future of AI: safer, more transparent, with defined lines of accountability from creation through operation.

#Complete Guide: Leading & Innovative Best Robotics Companies in USA

What Is the White House’s Big New “Game Plan” for AI?

The White House has released a brand-new plan for artificial intelligence (AI). It is not a new law passed by Congress, but rather an Executive Order. Think of it as the President issuing a very detailed instructional guide for all federal agencies — such as the Department of Commerce or the Department of Energy — on AI. This order serves as a blueprint for potential regulations, defining the government’s formal priorities for overseeing this rapidly advancing technology and laying the groundwork for U.S. AI policy.

At the heart of this new initiative are three primary objectives. First, it emphasizes safety and security; it requires developers of the most powerful AI systems to demonstrate that their models are secure before making them publicly available.

Second, it seeks to protect American citizens’ rights by focusing on minimizing AI-based discriminatory practices in hiring and privacy infringement by AI. Thirdly, the order seeks to maintain the United States’ position as a world leader in AI innovation; it will promote investment in AI and attract top talent from around the globe. These are the guiding principles of responsible AI governance that the government will adhere to.

The order is a starting gun, not a finishing gun. Each government agency now has a “to-do” list with deadlines to translate the broad goals of the order into specific, enforceable rules. This represents the first step in a long journey to understand the White House AI executive order and to shape how AI affects us; perhaps the next step will be to address the most critical risks associated with AI technology.

Regulation of AI in the United States: Explore how the regulation of AI in the United States is shaping responsible innovation and industry standards.

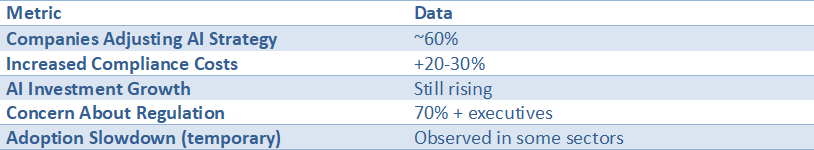

In the last few years, U.S. regulation of AI has been changing rapidly. In terms of its influence on how businesses build, test, and deploy AI responsibly, it is one of today’s most powerful forces. AI Regulation News in the United States reports on new regulations, guidelines, enforcement actions, and other developments related to AI, and businesses are finding that “move fast” can no longer stand alone; it must be accompanied by a range of controls, documentation, and accountability measures.

The Regulation of AI in the United States is doing more than reducing the potential risks of AI; it is establishing the industry norms that serious builders already use as the standard for their own work.

One of the primary ways in which the Regulation of AI in the United States is carried out is through the application of existing laws and regulatory frameworks: consumer protection law, civil rights law, privacy law, cybersecurity law, and sector-specific regulations in areas such as banking and healthcare.

Much of this effort is covered in AI Regulation News in the United States, which challenges teams to evaluate real-world impact issues, including but not limited to bias, unsafe outputs, deception, and poor data handling. Therefore, the Regulation of AI in the United States encourages practical control mechanisms, including, but not limited to, model evaluation, red teaming, human oversight, and a clear escalation path when problems arise.

Another key factor in AI regulation News in the United States is state activity. While some states have taken action in several areas (for example, biometrics, automated decision-making, and transparency requirements), the resulting patchwork will affect many nationally distributed products. This patchwork of regulations has led AI Regulation News in the United States to advocate for developing a flexible compliance plan that enables tailored solutions for use cases and risk levels, rather than relying on a single checklist.

A result of AI Regulation News in the United States’ advocacy for flexible compliance plans, the regulation of AI in the United States is leading to a greater amount of standardization in internal governance structures through processes such as an inventory of all AI systems, classification of those systems based on their potential impacts, and maintenance of records to explain how decisions were made relative to each system.

In addition to standardizing internal governance structures, AI Regulation News in the United States is positively influencing procurement and vendor management. As AI Regulation News in the United States reports increasingly high expectations for accountability among vendors, there are more rigorous inquiries into the quality of training data used to train AI systems, vendor-provided security measures and protocols, the ability of vendors to provide audit trails of their AI systems, and the limitations on the performance of the AI systems being procured.

These factors are driving the market toward clearer contract language, increased monitoring, and shared responsibility among all parties in the AI supply chain. As a whole, the AI regulation news in the United States is promoting responsible innovation by incentivizing vendors to be transparent, measure and reduce the risks of their systems, and demonstrate credible oversight. By keeping current with AI Regulation News in the United States, teams can better predict future regulatory changes and ensure that their product development, legal, and ethics work is aligned early before regulators, customers, or incidents require changes.

US AI laws: Understand the latest US AI laws influencing technology development and compliance requirements

US AI Laws are evolving through the combined actions of federal agencies, state legislatures, industry-specific guidelines and standards, and are also starting to influence the design and use of new technologies. Companies that use US AI Laws can track developments in AI regulation in the United States and identify which areas are likely to see stricter rules, particularly those involving higher-risk applications (e.g., hiring, lending, healthcare, education, government services, biometric identification).

US AI Laws news has forced many companies to move out of the experimental stage and begin compliance processes that can be reused on future projects.

Additionally, one of the key aspects of US AI Laws is that most of the obligations associated with the US AI Laws fall under general law governing business practices, not specific laws governing artificial intelligence. As such, when tracking AI regulation news in the United States, most regulatory bodies focus on how an AI system affects its users, including whether it misleads consumers, discriminates based on race, gender, age, etc., invades individuals’ privacy rights, or fails to protect user data adequately.

The requirements for US AI Laws may therefore require that organizations consider product development (the kind of data to collect, etc.) as well as the types of safeguards needed to protect against bias in the algorithms used in an AI system, the kinds of disclosures that should be made to users about an AI system’s use of a given set of data, and processes for handling user complaints or appeals for a human review of adverse effects resulting from decisions made by an AI system.

As noted previously, State action drives most other US AI Laws. State priorities represent different views on which risks should receive priority (e.g., Election Integrity/Deep Fakes, Biometric Privacy, Protection of Children, or Greater Transparency Regarding Automated Decision-Making). The patchwork nature of these laws creates barriers to rolling out such systems on a national scale, as discussed in AI Regulation News in the United States.

Moreover, US AI Laws are influencing business-wide (industry) standards and customer (buyers’) expectations. As AI Regulation News in the United States continues to report on new legislation and guidelines concerning AI, procurement teams have become accustomed to regularly requesting from their vendors proof of testing, documentation, and/or monitoring of their vendor’s AI as required by US AI Laws and for their customers.

In response to this trend, numerous procurement teams have developed working models of practical governance; maintained a catalog or inventory of all AI; identified and documented all systems based on identified risks; established methods for training and evaluating AI-based decision-making processes; and will continue to monitor the performance of AI-based systems for performance drift, safety concerns, and potential misuses.

The expanding expectations of transparency under US AI Laws have also created significant pressures to identify, tag, or “label” AI-generated products/content; explain how automated decisions were made using simple/plain language; and show that someone responsible can intervene if/when errors occur. Thus, staying informed about AI Regulation News in the United States is not merely a requirement for compliance; it is a way to begin creating scalable AI at the necessary levels of trust.

#Complete Guide: Leading & Revolutionary Top Quantum Computing Companies in USA

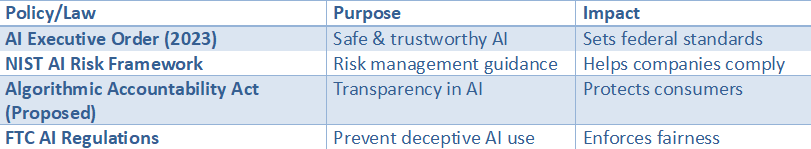

Key US AI Policies & Laws

Insight: The US uses a multi-agency approach rather than a single AI law.

Source:

- White House AI Executive Order

https://www.whitehouse.gov - NIST AI Framework

https://www.nist.gov

AI policy in the USA: Discover how AI policy in the USA guides ethical use, safety, and transparency in artificial intelligence systems

AI Policy in the USA uses a strategy similar to that of the rest of the world to develop and deploy Artificial Intelligence while ensuring ethical guidelines, safe use, and transparent standards. While the United States currently does not have an individual law or statute for the use of artificial intelligence within its borders, it develops AI policy through a variety of methods, including Executive Orders, Agency Guidelines, Industry Standards Work, and State initiatives to provide “responsible AI” in order to produce real-world products.

Through tracking AI regulation news in the United States, teams can determine which regulations/expectations have been codified into enforceable rules, such as Procurement Rules, investigations, and compliance programs.

The goal of protecting individuals from unintended harm – harmful discrimination, invasion of personal privacy, dangerous automatic decisions, and misleading AI-generated content – is a primary objective of AI Policy in the USA. As seen through AI Regulation News in the United States, regulators focus on whether the system works. More specifically, do the systems have bias when used? Are claims about accuracy misleading? Is sensitive information being processed in a secure manner?

Therefore, there are several practical applications of AI Policy in the USA for developers. Identify all of your data sources. Develop tests to measure bias and effectiveness across multiple demographics. Establish clear lines of accountability for approvals and changes.

The AI Policy in the USA for Regulating AI has identified three areas that will drive AI regulation. The first area is centered on “transparency” so that users and those impacted by an AI system can see where and when it was deployed, why it was deployed, and what it is doing.

Transparency can be accomplished via several means. For example, all content generated by an AI system should be clearly labeled as AI-generated; automated systems making decisions for individuals should provide a clear explanation of the decision(s) made; and a method should be established to allow humans to review/validate the results of high-impact decisions.

The second area of AI regulation News in the United States relates to “high-risk” applications (i.e., employment, lending, housing, healthcare support, education, and government services). Because these types of applications can directly influence many aspects of an individual’s life, they are typically subject to increased scrutiny and regulatory oversight regarding transparency and accountability.

AI Policy in the USA is also shaping the development of industry-wide AI governance practices. Organizations are establishing and utilizing various governance mechanisms, including (but not limited to): AI inventories; Risk Tiers; Pre-deployment Evaluation Tools; Red-Teaming Exercises; Incident Response Plans; Drift Monitoring; Misuse Monitoring, etc.

The AI Policy in the USA for Regulating AI has identified three areas that will drive AI regulation. The first area is centered on “transparency” so that users and those impacted by an AI system can see where and when it was deployed, why it was deployed, and what it is doing.

Transparency can be accomplished via several means. For example, all content generated by an AI system should be clearly labeled as AI-generated; automated systems making decisions for individuals should provide a clear explanation of the decision(s) made; and a method should be established to allow humans to review/validate the results of high-impact decisions.

The second area of AI regulation News in the United States relates to “high-risk” applications (i.e., employment, lending, housing, healthcare support, education, and government services). Because these types of applications can directly influence many aspects of an individual’s life, they are typically subject to increased scrutiny and regulatory oversight regarding transparency and accountability.

AI Policy in the USA is also shaping the development of industry-wide AI governance practices. Organizations are establishing and utilizing various governance mechanisms, including (but not limited to): AI inventories; Risk Tiers; Pre-deployment Evaluation Tools; Red-Teaming Exercises; Incident Response Plans; Drift Monitoring; Misuse Monitoring, etc.

AI governance in the USA: Learn how AI governance in the USA ensures accountability and oversight in emerging technologies

AI Governance in the USA: As organizations across the U.S. continue to roll out AI into areas that involve a greater amount of risk (e.g. Hiring/Lending; Healthcare Support; Customer Service/Marketing; Public-Sector Workflows) organizations are beginning to feel pressure to implement AI governance programs that will provide both accountability of AI systems — so there is a person accountable for the decision(s) made by the AI system; assess risk prior to implementing an AI system; identify issues with an AI system quickly and correct them promptly.

Teams that follow AI regulation news in the United States help keep their AI governance practices aligned with regulatory bodies, buyers, and the public’s increasing expectations.

Visibility is key to developing a successful AI governance in the USA. First, organizations must develop an inventory of all AI systems being utilized by the organization, what data is being input into these systems, who developed the systems (vendors), and why each system was purchased. The majority of recent AI Regulation News in the United States, states that organizations’ unknown AI usage creates compliance gaps, particularly when AI systems make automated decisions about individuals.

In addition, AI governance in the USA establishes formal oversight mechanisms to evaluate and validate AI systems before and after they go live. These commonalities include: pre-deployment validation and evaluation of AI systems for potential biases; ongoing assessment and analysis of AI systems’ robustness and their ability to resist manipulation; red teaming and other forms of adversarial testing and validation; security reviews and audits of AI systems to identify vulnerabilities and weaknesses; and the creation of transparent, accessible documentation of an AI system’s capabilities, limitations and potential risks.

As described in recent articles from AI Regulation News in the United States, regulatory bodies are increasingly focused on establishing standards related to the generation and use of evidence. Specifically: How was your AI tested? What were the results of those tests? What adjustments did you make as a result of those tests?

AI Governance in the USA translates these expectations into procedural requirements to ensure regulatory compliance.

In addition to compliance and evidence, the remaining three components of AI Governance in the USA are Accountability, Transparency, and User Trust.

Accountability refers to defining roles within an organization responsible for approving the deployment of an AI system; monitoring its performance; providing support to users if an issue arises with an AI system; and developing protocols for escalating incidents involving an AI system. It is also necessary to define audit processes to track historical usage of an AI system, and create validatable models for tracking changes made to an AI system during its lifecycle.

Additionally, as noted by various publications, including AI Regulation News in the United States, organizations should recognize that while ensuring compliance with regulations upon completion of an initial project is essential, it is equally important to establish methods to continuously monitor an AI system’s performance and behavior throughout its operational lifecycle. This is critical because many AI systems exhibit changing behaviors over time, and new ways an AI system can be exploited may not yet have been identified.

The last two of the four elements of AI Governance in the U.S.A. are user trust and transparency. Transparency means telling users, when they interact with an AI System, that an AI System is being used during their interaction. User Trust is based upon how well an organization explains to a user why an automated result was reached. In addition, the avenue for user trust has two primary forms: providing a way for users to have a human review of an automated decision and allowing users to appeal an automated decision.

Based on the report from “AI Regulation News in the United States,” we see a growing expectation for each of these three elements of AI Governance in the USA, especially within industries considered high risk or sensitive.

In summary, AI Governance in the USA presents a practical framework for organizations to be responsible innovators using AI Systems: Know your AI systems, evaluate the risks associated with them, detail and document their decisions, and continually monitor them throughout the entire lifecycle of an AI System. It would also be prudent to continue to follow the latest developments regarding the regulation and standardization of AI Systems by regularly reading “AI Regulation News in the United States.

#How AI is used in Healthcare in the USA: A Complete Guide

AI legal framework: Examine the evolving AI legal framework designed to balance innovation with public protection

The rapid growth of the legal framework for artificial intelligence (AI) is intended to promote innovation by the government and regulators while protecting the American people from potential harm caused by AI. It is unlikely that there will be a single regulatory body for all things related to AI; it is possible, however, that the AI regulatory framework will have a layered structure consisting of both the current consumer protection, civil rights, privacy, and cybersecurity laws/guidelines/standards, as well as new laws specifically created to govern the use of AI.

Monitoring developments in AI Regulation News in the United States will help you see how the AI regulatory framework will be implemented and enforced in practice.

Some of the primary reasons behind the development of the AI Regulatory Framework include reducing risks associated with the use of AI in high-impact applications, such as those affecting job opportunities, creditworthiness, homeownership, educational attainment, medical care, and access to essential community services. In addition to these issues, AI Regulation News in the United States frequently covers unfair outcomes due to bias in the decision-making process, lack of transparency in the decision-making process itself, and/or deceptive/dangerous output from an AI system.

The U.S. AI Legal Framework addresses the Complexity of the AI Supply Chain. Organizations rely on third-party models, datasets, and vendors when creating their products; therefore, this creates a collective risk to all parties involved.

As demonstrated by AI Regulation News in the United States, organizations are increasingly being held accountable for Due Diligence, including assessments of training data limitations, model performance limitations, and model security, as well as contractual agreements to audit and hold each other accountable for the use of third-party models. Therefore, the AI legal framework is affecting both the Product Development Process and Procurement/Vendor Management Processes.

There is another emerging area of focus within the AI legal framework. With increased responsibility for Accountability comes increased importance of Transparency. In some cases, organizations may be required to notify users that they are using an AI; label content produced by an AI; describe/explain how the AI reached a certain conclusion; and establish appeal mechanisms for users who disagree with an AI’s conclusions.

In most cases, Transparency fosters Trust — it allows users to identify potential problems (i.e., where the AI may have been incorrect), prevents organizations from representing AI output as something it was not, and provides opportunities for humans to intervene if necessary.

The aim of the AI legal framework is to create recognition and rewards for companies that can describe their AI systems’ purposes (the “what”), methods of design and testing (“the how”), and who will be held accountable for how these systems operate (“the who”). Since the AI legal framework is still evolving, the concept of compliance has shifted from merely checking boxes to satisfy rules to one that emphasizes flexible governance models that allow companies to adapt to evolving standards.

Companies have the opportunity to establish a strong foundation for the future with AI Regulation News in the United States, by inventorying every AI system within an organization, identifying risks associated with each AI system, and documenting all lifecycle-related business decisions. Companies will then be able to continue advancing in their AI development while being prepared for future regulatory expectations. Ultimately, the purpose of the AI legal framework is to enable both the continued advancement of AI and the protection of individual rights and interests.

US AI guidelines: Stay informed about official US AI guidelines that help organizations implement AI responsibly

There will likely be a period of time before laws are in place for the deployment of AI. That said, US AI guidelines have been established through the efforts of federal agencies, standards organizations, and public-sector purchasing expectations. Through AI Regulation News in the United States, Teams can monitor US AI guidelines to see where the momentum is going regarding US AI regulations, as well as their implementations across various industries.

Risk-based governance is a common theme in U.S. AI guidelines. Rather than treating each AI tool similarly, US AI guidelines advocate identifying AI tools that will most significantly impact society (e.g., hiring, lending, healthcare, education, and public services) and applying more stringent controls to use cases deemed at higher risk of causing harm.

Therefore, when reading about regulatory requirements from AI Regulation News in the United States, it is commonly reported that regulatory authorities and buyers desire proof of controls — not simply policy statements — and therefore, if your team follows the recommendations of US AI guidelines, you will be better prepared to establish defensive procedures prior to an incident occurring or prior to an investigation beginning.

Additionally, US AI guidelines emphasize data quality and privacy. Responsible use of AI begins with understanding what type of data is being used, whether it is sensitive, how it was collected, and whether it may contain inherent bias. Additionally, US AI guidelines recommend that documentation of the source(s) of the data used, minimization of nonessential data collection, and system security to prevent unauthorized access/leakage are best practices.

Poorly managed data has been associated with increased enforcement risks in the eyes of regulatory bodies, as noted by AI Regulation News in the United States; therefore, US AI guidelines offer value to compliance and security teams.

The US AI guidelines place strong emphasis on testing and monitoring as fundamental parts of AI governance. Consequently, the US AI guidelines encourage organizations to test and assess the quality and performance of their models before deployment and to continue this process after deployment, so they can identify whether a model has changed (drift) or developed a new behavior (misuse).

According to multiple articles in AI Regulation News in the United States, “set it and forget it” is no longer acceptable regarding the governance of AI models. The US AI guidelines provide direction on the continued oversight of deployed AI models, including definitions of which aspects of a model should be monitored, how often reviews should occur, and when to cease or roll back a system.

As with many other forms of AI governance, transparency and accountability are important components of the US AI guidelines. Transparency and accountability may take the form of informing users that they are interacting with an AI model, labeling information produced by an AI model, documenting the limits of an AI model, and allowing users to review decisions produced by the AI model that directly impact the user.

It appears from numerous articles in AI Regulation News in the United States that transparency and accountability expectations are rising across all areas of AI, particularly in high-risk and/or sensitive types of applications.

The US AI guidelines assist organizations in determining who will be responsible for each organization’s AI systems, documenting audit trail data, and developing incident response plans to comply with changing regulatory requirements. Organizations should treat US AI guidelines as “living documents”; therefore, organizations should: Follow developments in AI Regulation News in the United States. Modify their internal policies accordingly; Educate their employees and contractor personnel; Require their vendor(s) to adhere to equivalent standards.

How New Rules Plan to Keep You Safe from Risky AI

To respond appropriately to the risk posed by AI, we need to identify the specific risks it poses to society. Not all AI is created equal; there are numerous different types of AI systems. Some have a greater potential to cause damage or disruption in society than others. As cars should be regulated by type (i.e., bicycles less heavily regulated than eighteen-wheelers), AI technology should also be regulated accordingly. For example, the regulatory requirements for an AI system that suggests music would be significantly less stringent than those for an AI system responsible for managing a utility company.

To protect society from these risks, the largest and most impactful AI systems will be subject to the highest safety and security standards to prevent catastrophic failures and errors. Before AI systems that could cause severe harm to society are permitted to operate, they must demonstrate their safety to the appropriate governmental agencies.

This process of demonstrating safety would require testing similar to that conducted by automobile manufacturers for crash testing prior to permitting their vehicles to be sold and placed on highways. Under this proposed plan, developers of AI systems that pose a high level of risk to society will also be required to conduct safety and security testing before bringing such systems to market.

The test results may also be reviewed by the federal government; the tests are intended to determine whether the artificial intelligence system has any vulnerabilities. In addition to testing for potential vulnerabilities, the tests are also being conducted to determine whether the AI system can be compromised through hacking and to assess whether it can produce socially harmful bias or incorrect information.

Additionally, the proposed regulatory plan will work to develop a framework for regulating AI-based systems. It will also work to solve another problem: misinformation. The U.S. Government has announced its intention to establish an obligatory labeling requirement for all AI-generated fake media (images/videos), similar to how a photographer applies a “digital watermark” to indicate they were produced using software.

This label will allow consumers to know when something has been created using AI. While the primary purpose of this labeling requirement is to allow consumers to determine what is factual vs. non-factual, it also allows them to better understand the role that AI-based technologies play in their day-to-day decision-making (e.g., do I get a job interview?).

Will AI Read Your Resume? How the Government Aims to Protect Your Rights

The new rules are based on asking the exact same question. AI is currently being used to help decide whether people qualify for jobs, loans, and how much their insurance should cost. If we use flawed historical data to train our AI models, our AI systems will also make flawed decisions.

This is called algorithmic bias. An example of this is creating an AI model using your company’s historical data. Let’s say your company has historically hired mostly men for a specific position. Then, when a woman comes along looking for that same position, she may encounter biases introduced by the AI system because it was trained on all the hiring data from your company.

To address this issue, the U.S. Government has established that prior anti-discrimination laws remain applicable and that, regardless of whether a human or a machine made the decision to discriminate, if a business engages in discrimination in its hiring processes, it can’t get away with it. Federal agencies responsible for regulating these areas will enforce these new rules, meaning that if businesses use discriminatory methods using AI to make hiring decisions, they may face serious legal consequences.

One of the most important questions is how we can tell if an algorithm has bias. For many years, there was no requirement for “algorithmic transparency,” but today there is a demand for more transparency.

One example of regulatory efforts toward greater transparency is the idea of requiring similar types of nutritional labeling on foods; i.e., if a company uses an algorithm to make a decision about you (e.g., denying you employment), it will need to explain how the algorithm made its determination. In turn, you will know why you were denied employment, rather than relying solely on a “black box” system that cannot explain how it determined your eligibility.

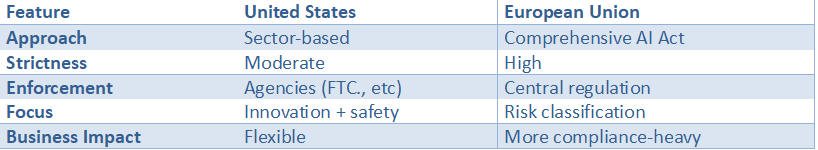

The ultimate goal here is to assure fairness through transparency to all citizens. As AI becomes an increasing part of our daily lives, U.S. civil rights regulations aim to protect Americans from discriminatory practices and require explanations of how these algorithms work. The U.S. approach, however, is vastly different from what is taking place in Europe. The EU has created entirely new laws (regulations) relative to the use of artificial intelligence.

AI Hiring Bias Protection

It’s Not Just Washington: How Your State Is Making Its Own AI Rules

The U.S. government has made it clear that it wants to develop broad guidelines for artificial intelligence. However, many other state governments have moved quickly to enact new rules and regulations governing the use of AI. Many see the individual states as laboratories, testing various ways to govern artificial intelligence, and each will offer its own approach to regulating AI.

In addition to creating a patchwork of state-by-state AI privacy laws, this trend could lead to drastically varying degrees of protection for you depending on which state you reside in. Therefore, this could result in a confusing landscape of AI-related legal issues across the United States for both individuals and businesses alike to navigate.

An example of such a state developing its own AI regulatory framework to protect residents’ PII is Illinois’s law. The law mandates that companies obtain affirmative consent before capturing or storing biometric data (such as fingerprints or facial geometry) from consumers.

The Illinois law has received widespread coverage in national news outlets, in part due to several high-profile lawsuits initiated by the State Attorney General against Apple and Facebook over the companies’ automated photo-tagging features on social media. This is an excellent example of a state creating its own regulatory framework for AI, thereby providing consumers with direct control of some of the most intimate aspects of their PII.

However, Illinois is just one of many states moving to enact consumer data privacy laws that affect how AI systems collect, store, and use consumer data. The trend shows that, at least at present, states appear to be offering the greatest degree of consumer protection for your digital life. Nevertheless, while states continue to rapidly pass laws regulating AI development, the challenge facing the country grows — finding a balance between providing the consumer protections required by AI development and allowing sufficient innovation in AI.

US vs. Europe’s AI Act: Two Different Rulebooks for the Future

The European Union (EU) has established a framework across its twenty-seven (27) Member States through the EU AI Act. That is to say, while in the US there is no single regulatory body governing the use of AI, but rather a multitude of agencies each developing their own guidelines and standards for using AI, the EU will be governed by a single overarching regulatory framework.

As such, this represents a historical first in establishing a single regulatory approach to AI governance internationally.

In other words, the more restrictive the regulation of an AI system, the higher the potential risks posed to individual(s).

Another unique feature of the EU’s regulatory approach is the creation of a “traffic light”- style classification system used to assess the degree of risk posed by various forms of AI systems.

- Red light means no-go for your AI. Any AI that you believe may jeopardize human rights should be stopped. An example would be a government-run social credit program that ranks citizens’ behavior.

- Yellow light is high-risk. If an AI has the potential to endanger someone’s life, it will need constant monitoring. This type of AI includes systems used in job applicant screening, loan approvals, and other critical infrastructure, such as water treatment.

- A green light means go ahead with minimal regulation on many of the AI applications we use today. These can include: video game development, email spam filters, and so forth.

Overall, the main differences between the U.S. and EU comparative analyses of AI Acts stem from differing approaches to creating a comprehensive regulatory framework for AI. The EU is developing one unified, very detailed, and overarching regulatory framework for all types of AI systems, whereas the U.S. is developing new regulatory frameworks based upon already established regulatory frameworks, with each governmental agency having the authority to create its own regulatory framework regarding the use of AI in each of its respective regulatory jurisdictions.

The flexibility to regulate AI through this decentralized approach is an essential aspect of how AI will be regulated in the United States. However, it should be noted that factors beyond the federal level could impact or influence AI regulation news in the United States. For example, your State government may become engaged in the regulatory process surrounding the use of AI.

US vs EU AI Regulation

Impact on Business and Innovation: A Balancing Act

There are conflicting perspectives regarding the level of regulatory control for AI. A number of stakeholders have expressed concerns about the governance of AI as it relates to societal protection, while others have expressed concern that overly restrictive regulation may impede AI innovation. Consumers need to feel assured that AI-based products are both safe and equitable. When consumers perceive AI-based products as unsafe and/or inequitable, then the likelihood that consumers will use such products decreases dramatically.

Therefore, if the perceived safety and equity issues associated with AI prevent consumers from using it, the positive impacts of AI will never be realized. The negative impact of AI regulatory oversight on innovation can be viewed as a matter of striking a delicate balance between creating a safe environment for society (i.e., by reducing the risks associated with AI) and maintaining the creativity and momentum within the field of AI innovation.

A number of companies face challenges because there are currently no established rules or regulations governing how companies should operate when developing and using AI technologies. Many factors exist that create uncertainty for companies attempting to develop and implement AI-based technologies. Operating in a highly uncertain regulatory environment increases a company’s exposure to significant risk. This increased risk can discourage companies from investing significant capital in R&D to develop new technologies.

A stable, predictable market for AI-based technology will exist when there is a clear and consistent regulatory structure. When there is a consistent regulatory framework, businesses will know what to expect and what standards they need to meet, enabling them to produce products that customers trust. Therefore, the regulatory agency’s role in creating a stable market through regulation will help answer how AI-based regulatory oversight will impact business growth.

Currently, regulatory agencies do not clearly define the boundaries of the regulatory oversight process for AI-based technologies. To address this issue, regulators are developing concepts for “regulated sandboxes,” or “controlled environments,” that allow companies to experiment with new technologies while under close regulatory monitoring during development. Companies can use regulated sandboxes to conduct field trials of new AI-based technologies and assess potential issues before full-scale product releases.

The government can also obtain valuable data on the success and failure of emerging technologies. As a result of this collaborative approach to regulation, regulatory oversight of AI is expected to become one of the most innovative approaches to regulating emerging technologies.

AI Regulation Impact on Business

What to Watch For: 3 Key AI Regulation Trends That Will Affect You Next

From all we’ve discussed so far, one thing is clear – no single bill or law will govern how the US government uses Artificial Intelligence. Think of it this way – the US government has created a playbook with many chapters. The White House has provided the overall structure of the playbook.

The Federal Agencies have provided details on what they need to do in each Chapter. The State Governments have defined their own rules for the playbook as it applies in their individual jurisdictions. From reading the headlines you’ve read over time about AI regulation news in the United States, you’re now aware of the overall structure of the playbook and how AI will be governed in the United States.

Now that you know some of the key aspects of the regulatory environment around AI in the US, here are three items you’ll want to continue tracking in both your personal and professional lives.

- Labels and Watermarks: Clear labels and watermarks indicating the content was generated using AI will appear throughout various forms of content, so you know when you are viewing a creation generated by humans versus machines.

- A Demand for Explainable AI: In many cases, you will have the right to explain why an algorithm has made a significant decision affecting your life, such as getting approved for a loan or receiving a job offer.

- Legislation in Congress: Bipartisan coalitions of Senators and Representatives will begin to convert the White House’s recommendations into enforceable, binding legislation.

In addition to the use of clear labels and watermarks, there will also be a greater demand for AI to provide explanations for decisions it makes based on algorithms. Additionally, legislation will be introduced in Congress requiring companies to comply with the White House’s recommendations.

Therefore, with this information, you can recognize and understand these emerging trends across news and articles about AI, and participate in discussions about how we envision our society evolving with AI.

Therefore, one of the best ways to support the growth of a more open, more accountable AI industry is to remain a more informed consumer and ask questions about the new tools you encounter, request transparency about them, and even, as an informed user, you can help create an AI industry that is more open, more accountable and ultimately beneficial to all. (END) I added ‘even’ at the end to provide some flexibility at the end. The final sentence could easily be cut and still work. However, I thought adding that word helped to give a little extra feeling of what a good user experience might feel like.

Future AI Regulation Trends

Conclusion

AI regulation news in the United States is entering a new phase of regulation, driven by a combination of White House mandates, Federal guidelines, and State initiatives, rather than a single overall law.

The New Age of Artificial Intelligence Oversight will focus on areas with the greatest potential for adverse societal consequences and, therefore, require additional safety tests, enhanced security, and clearer lines of responsibility for high-impact systems. While Policymakers are attempting to preserve core individual rights through the application of existing civil rights and anti-discriminatory legislation, as well as requirements for increased transparency whenever AI impacts employment opportunities, creditworthiness, housing, health care, or education.

Regulatory Developments related to AI will inform users at the end of the process about both the presence of AI-generated content and the mechanisms by which large-scale automated decisions are made. Users will be able to challenge automated decisions they believe are unfair.

Similarly, Organizations will see a similar set of expectations: the responsible use of AI is now mandatory. With the implementation of organization-wide AI projects, documentation of data sources and assessments of potential biases and reliability will become required. Human Oversight of Automated Decisions will also be a requirement. Additionally, continuous assessment of organizational AI systems after launch will be a standard expectation (and no longer a competitive advantage).

In the near term, we expect an increase in labeling requirements, explainable AI requirements, and legislative efforts to codify present policy into actionable statutes. For Consumers to help shape the future of Safe & Trusted Artificial Intelligence Solutions, we encourage continued education on the use of AI and ask that you provide input by asking intelligent questions to aid in the development of AI Solutions.

FAQs

1) Is there one single AI law in the United States?

Not yet. The United States is currently developing its AI oversight framework through a combination of White House directives (such as executive orders) and enforcement by federal agencies through applicable existing law. State legislatures have also enacted legislation addressing specific AI risks.

2) What does the White House AI Executive Order actually do?

This has provided direction to the federal government on AI oversight priorities, including the development of guidance, standards, and enforcement methods related to AI safety and security, the protection of civil rights and privacy, and continued U.S. leadership in AI research and development.

3) How will AI rules affect hiring, lending, and other high-stakes decisions?

The federal government emphasizes that the use of AI does not supersede current anti-discrimination and consumer protection laws. As such, companies may be required to implement additional methodologies to test for bias, document the results of these tests, and provide consumers with an explanation of how automated decisions were made and/or the opportunity to review these decisions.

4) What are “high-risk” AI systems, and why do they face stricter scrutiny?

High-Risk Systems are defined as those systems that could significantly impact people’s lives and safety (for example, health care support systems, critical infrastructure, and employment screening). According to the article, high-risk systems will be subject to enhanced testing requirements, security measures, and oversight before and after deployment.

5) How do U.S. rules compare to the EU AI Act?

The EU AI Act is a single, comprehensive law that categorizes all AI by the level of risk involved, ranging from completely acceptable to prohibited. The U.S. approach to regulating AI has been decentralized to date; it has adapted existing law and allowed each agency and state to develop “guardrails” within their respective domains.