Quantum Computing is developing rapidly and may be revolutionary for numerous scientific disciplines and technologies. However, numerous barriers must be overcome for quantum computing to reach its full potential.

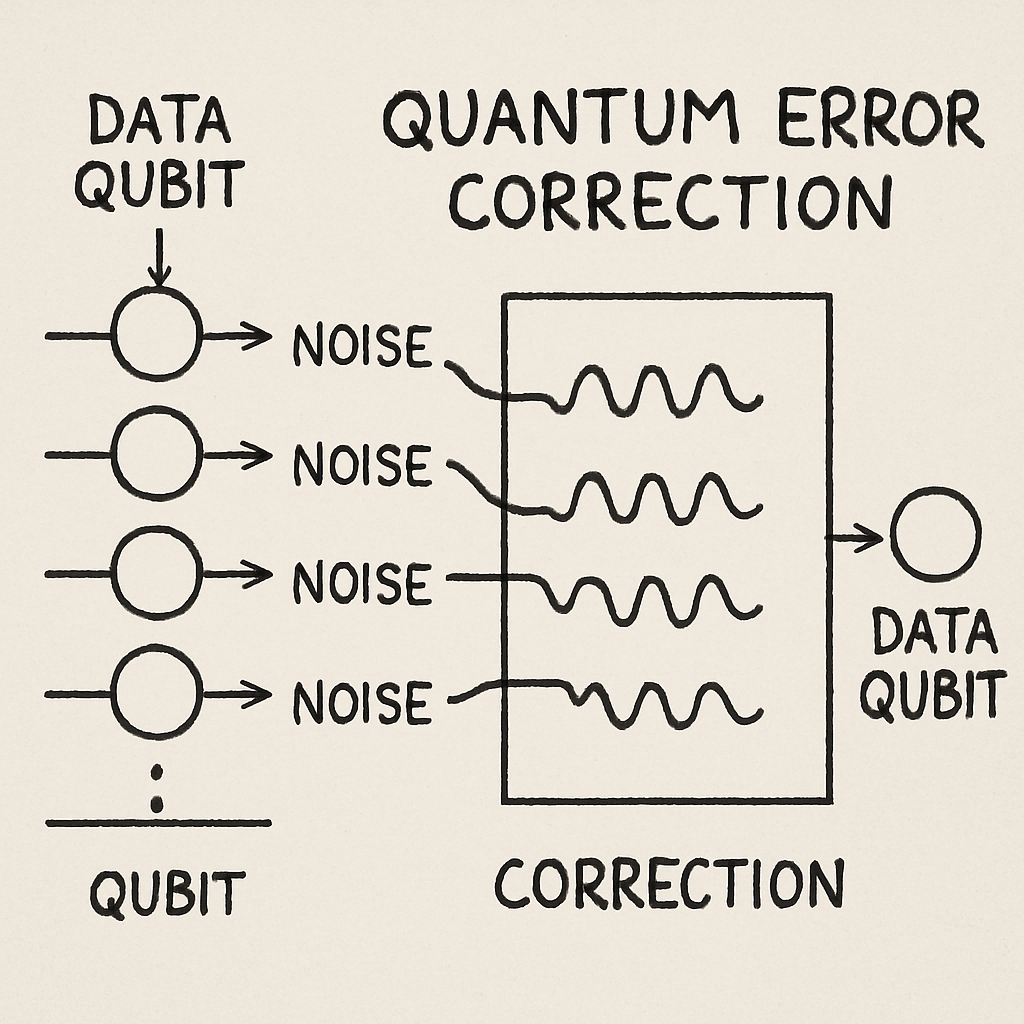

One such barrier is quantum error correction. Due to the nature of quantum systems, errors can occur in quantum computations, disrupting the computation itself. Therefore, to create reliable quantum computations, methods to protect quantum information from errors are required. This includes the development of error-correcting codes that will allow for the creation of scalable quantum computers.

Qubit error correction is currently one of the most actively studied areas of quantum computing. Its purpose is to develop codes that encode information across a group of qubits so that errors occurring during a computation can be both detected and corrected. Once a system is fault-tolerant, it can run algorithms without accumulated errors degrading the result.

In addition to developing reliable and resilient quantum systems, it is necessary to develop methods to protect the integrity of the quantum information being processed by the system over time, enabling long-term processing.

A major factor enabling scalable quantum systems has been recent advancements in error correction. These advancements can make a positive contribution to the field of quantum computing in the short term.

Classical error correction is a source of inspiration for the development of quantum error correction. However, classical error-correction techniques require modification to account for the differences between classical and quantum errors.

Advances in quantum error correction will directly affect the future of quantum computing. The ability to reliably correct errors will be a requirement for any meaningful application of quantum computers. Advances in this area are a key step in achieving quantum supremacy.

Summary

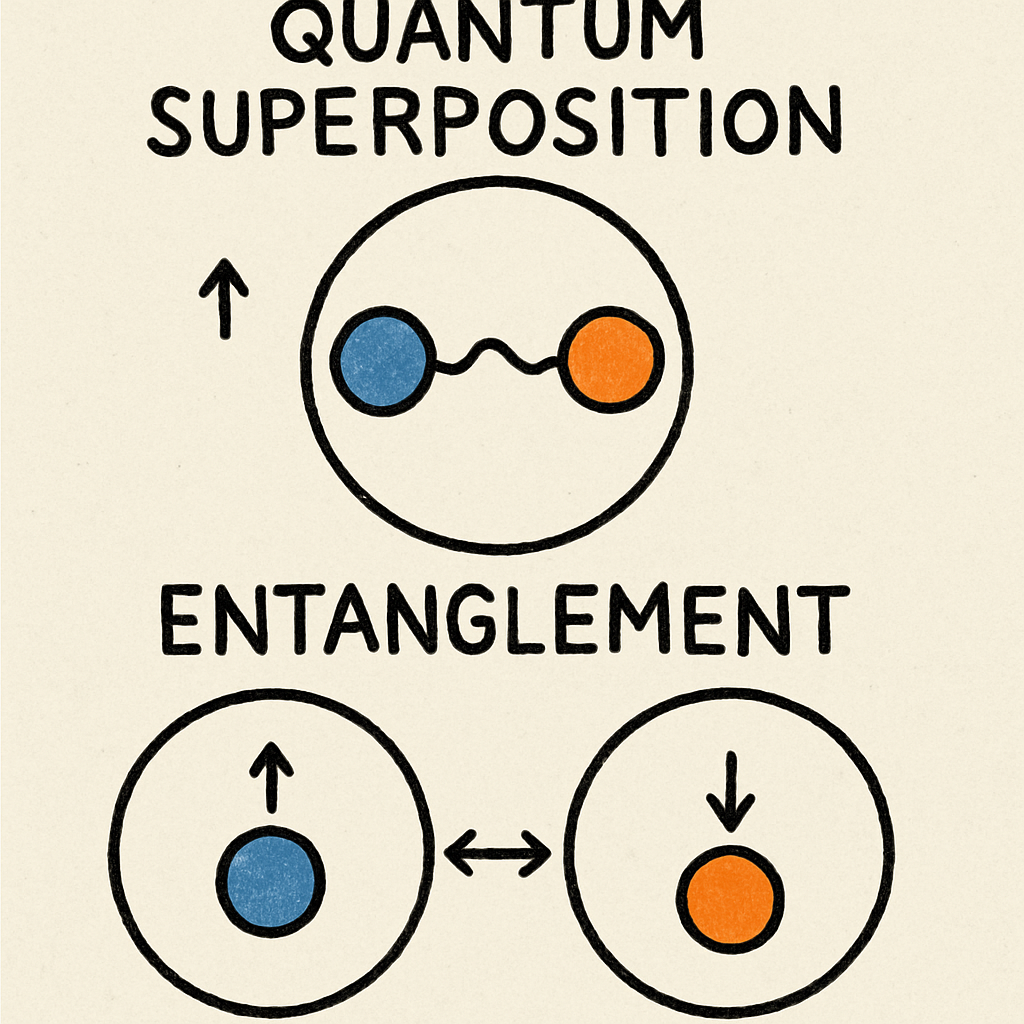

“The future of scalable quantum machines with quantum error correction” explains why error correction is needed to make quantum computing practical. The article begins by describing the capabilities that quantum computers gain from superposition and entanglement, which could be used to solve problems in fields such as molecular simulation and complex optimisation. The authors emphasise that because qubits decohere quickly, they tolerate only very small amounts of noise before their operation is disrupted.

The article then gives examples of how small amounts of interference can lead to “bit flip” or “phase flip” errors, or a combination of both, which can cause cascading failures in a computational process. After describing the unique vulnerabilities of qubits and the different ways they can fail, the article outlines both classical and quantum methods for correcting those errors. Classical error correction uses redundancy to identify errors. Quantum error correction cannot simply copy that approach, and the authors describe the major challenges involved. These stem primarily from the “no-cloning” theorem and from the fact that directly measuring a quantum state destroys its quantum properties.

To address these challenges, the authors describe the development of quantum error-correction codes. These codes store one logical qubit across multiple physical qubits and use ancillas to detect errors in the form of syndromes without measuring the encoded quantum state. The article also reviews several prominent families of quantum error-correction codes, including surface codes, topological codes, and quantum LDPC codes, and explains that surface codes are especially attractive because they detect errors using only local checks on a grid.


Recent developments have led to improved code designs and better hardware with longer coherence times and richer connectivity. Researchers are also applying new techniques, such as machine-learning-based decoding, to improve error correction. These advances all feed into fault-tolerant quantum computing, which uses logical qubit encoding and active error management to keep a machine operating correctly as long as physical error rates stay below a threshold.

Quantum computing is currently limited by high qubit overhead, low gate fidelity, and poor scalability. Future development paths discussed in the paper include improved resource efficiency, closer integration of quantum error correction with quantum hardware, and greater noise isolation. The authors believe that further breakthroughs in quantum error correction will lead to reliable, scalable quantum devices and ultimately allow quantum computing to realise its true potential.

Quantum Computing: Quantum computing unlocks new computational power beyond classical limits

Quantum computing is a fundamentally different way of processing information. It uses qubits (quantum bits), which can exist in superposition and can become “entangled.” A classical register holds one value at a time, whereas a register of qubits can hold a superposition of many values; quantum algorithms use interference between those values to make correct answers more likely to be measured.

Quantum computing could accelerate certain tasks, most notably simulating chemical properties, optimising complex systems, and speeding up parts of cryptography and machine learning. For those who develop road maps, it offers a route to very large-scale computational experiments that are difficult to run even on the world’s largest current supercomputers.

Classical computers utilise the fact that each bit is either 0 or 1. Because we know how to manage errors by redundancy, it is typically simple to produce reliable classical computing hardware.

Quantum hardware, built around quantum processing units (QPUs), is extremely challenging to develop because qubits are sensitive to external factors such as temperature fluctuations and electromagnetic interference. In addition, measuring a qubit collapses it into one of its two basis states, destroying the superposition it held. As a result, in a large-scale quantum computer with thousands of qubits, individual errors accumulate and undermine the reliability of long computations.

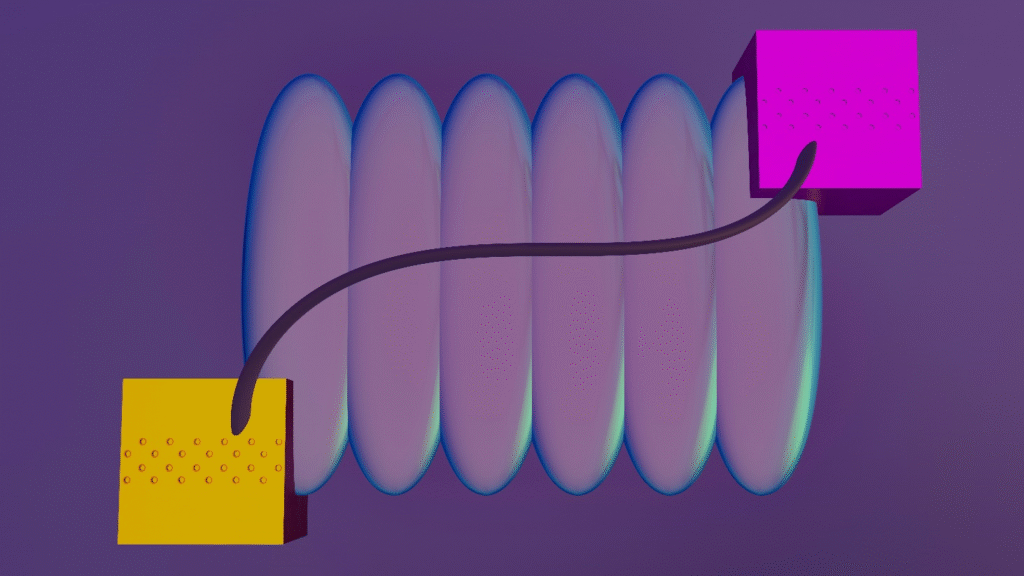

This is where Quantum Error Correction comes into play. It protects the information stored in the QPUs while enabling long computations, and it does so by encoding a single logical qubit onto multiple physical qubits. Because its checks measure only joint properties of the physical qubits, not the encoded value itself, Quantum Error Correction can test whether the encoded information has been corrupted without destroying it.

By periodically performing specially designed parity measurements, Quantum Error Correction can determine the type of corruption that occurred and then apply the appropriate correction to keep the computation on track.

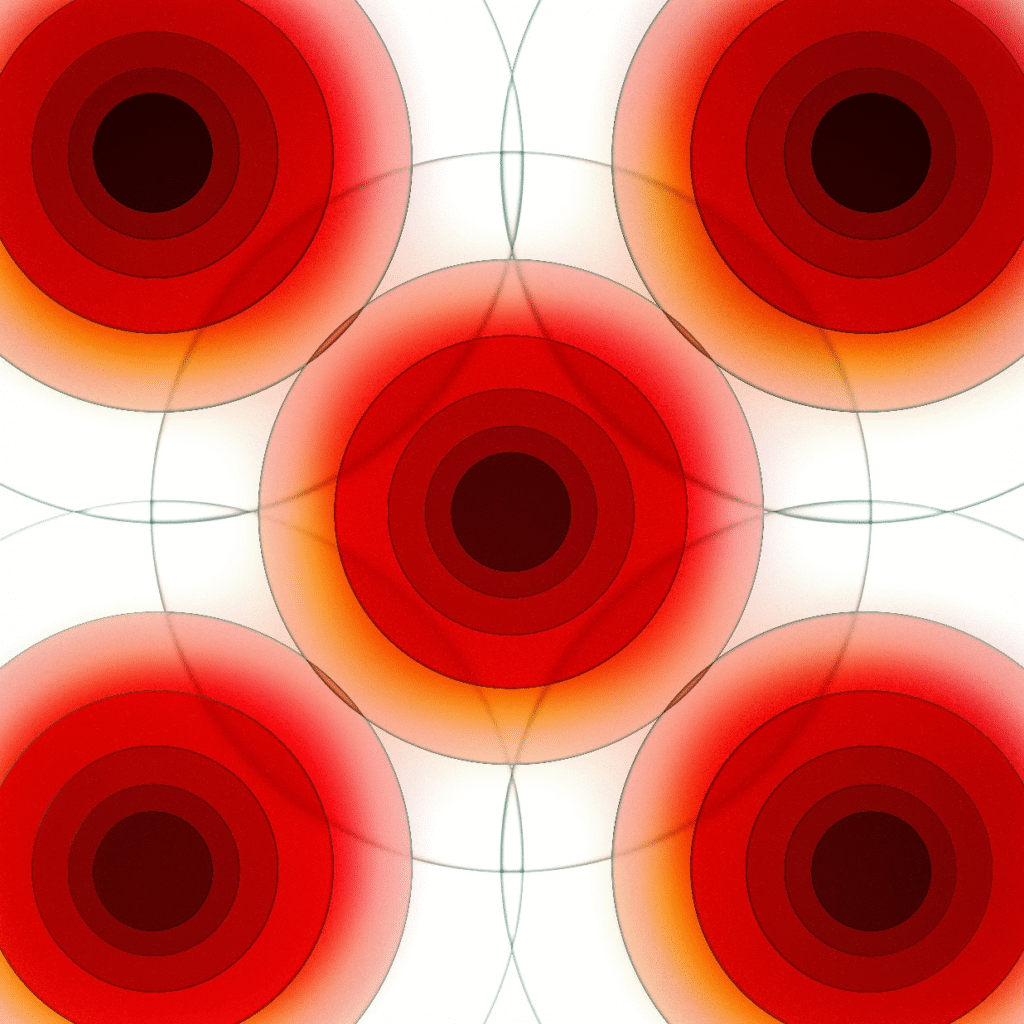

Surface codes and similar schemes are currently the most popular approach to Quantum Error Correction. They are popular mainly because they require only local interactions between neighbouring qubits, and because they suppress the logical error probability exponentially as the code grows, provided the physical error rate is below the code’s threshold.
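The suppression described above can be illustrated with a much simpler stand-in. The sketch below is a toy model, not a surface-code simulation: it assumes a classical repetition code of odd size d, independent flips with probability p on each copy, and majority-vote decoding. The function name `logical_error_rate` is chosen here for illustration.

```python
from math import comb


def logical_error_rate(p: float, d: int) -> float:
    """Probability that a majority vote over d copies gives the wrong answer.

    Assumes each copy flips independently with probability p (toy model).
    """
    if d % 2 == 0:
        raise ValueError("use an odd d so the vote cannot tie")
    t = d // 2  # the vote fails when more than t copies flip
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k) for k in range(t + 1, d + 1))


# Below the toy threshold (p < 0.5) every increase in d lowers the failure rate.
for d in (1, 3, 5, 7, 9):
    print(f"d={d}: logical error rate = {logical_error_rate(0.01, d):.3e}")
```

In this toy model the threshold is p = 0.5: below it, a larger code fails less often, while above it a larger code fails more often. Real surface-code thresholds are much lower and depend on the noise model and the decoder.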

Quantum computing can only live up to its potential when it is fault-tolerant. A quantum processor with both quantum error correction and sufficiently reliable logic gates can run for millions or even billions of operations without accumulating significant logical errors. That would allow much longer and more complex quantum algorithms than are possible today, supporting applications such as drug and battery development, quantum communications, and optimisation.

We have a couple of small examples of where a quantum processor can outperform a classical processor in a controlled environment. But until we increase the coherence time of our qubits, increase the speed at which we measure, and develop more sophisticated software to control our quantum processors, we won’t be able to leverage the large-scale computing power of a quantum processor.

In the near term, we will see early versions of this technology used in hybrid workflows. A classical computer executes most of the workflow, and the quantum processor executes only the parts that are difficult to run classically. The expectation is that quantum computing will eventually solve problems significantly larger than those classical computers can handle.

That only happens when hardware and software advance together. Improving the materials, control electronics, calibration, and compiler of a quantum processor reduces the system’s overall noise, and the reliability provided by quantum error correction then makes scalable quantum processors possible.

The Foundations of Quantum Computing and the Need for Error Correction

Quantum computing uses “qubits” instead of “bits” as its fundamental unit of data. Qubits are unique in that they can exist in a combination of states simultaneously (superposition). Quantum computers can also create entanglement between qubits. When two or more qubits are entangled, their measurement outcomes are correlated regardless of the distance between them, and their joint state cannot be described one qubit at a time. Because the state of n qubits is described by 2^n amplitudes, this opens the door to computations that, for certain problems, would exceed the capabilities of any classical system.
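Superposition and entanglement can be made concrete with a tiny state-vector calculation. The sketch below is a minimal illustration, assuming a two-qubit state stored as a list of four amplitudes in the order 00, 01, 10, 11; the helper names are hypothetical and no quantum library is used.

```python
from math import sqrt


def hadamard_on_first(state):
    """Apply a Hadamard gate to qubit 0 of a two-qubit state [a00, a01, a10, a11]."""
    a00, a01, a10, a11 = state
    s = 1 / sqrt(2)
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def cnot_first_controls_second(state):
    """Flip qubit 1 whenever qubit 0 is 1, which swaps the 10 and 11 amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


zero_zero = [1.0, 0.0, 0.0, 0.0]  # both qubits start in 0
bell = cnot_first_controls_second(hadamard_on_first(zero_zero))
probabilities = [abs(a) ** 2 for a in bell]
print(probabilities)  # only the outcomes 00 and 11 have non-zero probability
```

The Hadamard gate puts the first qubit into superposition, and the CNOT entangles the pair: each qubit on its own gives a random result, yet the two results always agree.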

Because of these characteristics, quantum computers could solve certain complex problems far faster than classical machines. For example, a sufficiently large fault-tolerant machine could factor large numbers rapidly and could efficiently model molecular behaviour.

Errors can arise in quantum systems in two ways. Decoherence results in the loss of a qubit’s quantum state. Noise will result in the instability of a qubit. Irrespective of whether it arises from decoherence or noise, either form of error can affect the computation being performed and therefore reduce its reliability.

To counteract these errors, it is essential to implement error-correcting codes to protect quantum information and enable reliable computations. To develop large-scale quantum machines, qubit error correction is critical.

Several types of multi-error-correcting methods have been implemented by researchers. The most well-known are:

• Surface Codes: Surface codes arrange qubits on a two-dimensional lattice and detect errors with parity checks on neighbouring qubits.

• Topological Codes: Topological codes store the encoded information in global, “topological” properties of the quantum state, which local errors cannot easily change. Surface codes are the best-known example.

• Quantum LDPC (Low Density Parity Check) Codes: Quantum LDPC codes are derived from their classical counterparts and share their defining property: each parity check involves only a few qubits.

The error-correction methods described above offer a significant opportunity to build fault-tolerant quantum computers and improve the fidelity of quantum algorithms by effectively handling computational errors. As researchers continue to advance the state of the art in quantum computing, the need for highly accurate error correction will grow. Currently, error correction is considered one of the most significant barriers to the development of large-scale quantum computers.

Researchers anticipate that advancements in error correction may also help alleviate some of the constraints associated with current quantum computing implementations. Ultimately, the improvements in error correction will enable researchers to fully exploit the power of quantum computing. Advancements in error correction will facilitate the development of larger, more reliable quantum systems, thereby enabling the widespread use of quantum computing for a wide variety of scientific disciplines.

Quantum Information: Quantum information represents data stored in delicate qubit states

Quantum Information exists in qubit states, which are fragile and exist in superposition states. Classical information is always in a “safe” state, either 0 or 1. The probability amplitudes and relative phases of the qubit contain all the data. It is the phases that give meaning. They provide the correct interference patterns for finding solutions using quantum algorithms and determine the degree of correlation in entangled systems.
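The role of relative phase can be shown with two single-qubit states that look identical when measured directly. The sketch below is a minimal illustration, assuming a qubit stored as a pair of amplitudes for 0 and 1; the helper names are hypothetical.

```python
from math import sqrt


def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state [amplitude of 0, amplitude of 1]."""
    a0, a1 = state
    s = 1 / sqrt(2)
    return [s * (a0 + a1), s * (a0 - a1)]


def probabilities(state):
    """Measurement probabilities for the outcomes 0 and 1."""
    return [abs(a) ** 2 for a in state]


plus = [1 / sqrt(2), 1 / sqrt(2)]    # relative phase +1
minus = [1 / sqrt(2), -1 / sqrt(2)]  # relative phase -1

# Measured directly, the two states are indistinguishable.
print(probabilities(plus), probabilities(minus))

# After interference (a Hadamard gate) the phase decides the outcome.
print(probabilities(hadamard(plus)), probabilities(hadamard(minus)))
```

Both states give 0 or 1 with equal probability when measured directly, but after the Hadamard gate the first always gives 0 and the second always gives 1. A phase error that turns one into the other therefore changes the result of the computation even though no bit value has flipped.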

The same sensitivity that lets qubits carry out calculations beyond the reach of classical computers also makes them easy to disrupt. Potential disruptions include heat, stray electromagnetic radiation, imperfectly applied control pulses, and even the smallest defects in materials. Any of these can disturb the qubit’s state and degrade its phase relationships. This is known as decoherence.

Qubits cannot be copied the way one would copy a file (the no-cloning principle), so quantum data cannot be protected by simply making a backup. Measuring a qubit too soon collapses it to a single state, eliminating the quantum information intended to be preserved. This creates a fundamental engineering problem: how can errors in qubit data be detected and corrected when the quantum state encoding the data is unknown and must not be read?


Quantum error correction is a technique for protecting a computation from decoherence and other noise. It works by encoding one “logical” qubit using multiple “physical” qubits. After each round of operations, the control system measures the “syndrome,” which indicates the most likely error type (phase flip, bit flip, or both). Because the syndrome measurement does not reveal the logical state, it can be repeated to monitor how errors evolve over time and to apply corrections that keep the logical information stable during long circuit sequences.

Surface codes are a class of quantum error-correction methods with characteristics that appeal to experimentalists. They need only local interactions between neighbouring qubits, and their logical error rate per cycle falls significantly as the code size increases, as long as physical error rates are low enough.

Quantum information goes far beyond computing. Entanglement creates correlations between particles that can be distributed over large distances. This property has been used in applications such as quantum key distribution (QKD) and quantum teleportation. Phase information has also been used in quantum sensing to measure time intervals, field strengths, and the velocities of objects with high accuracy. What these applications have in common is that they depend on the phase coherence of the quantum states; when phases become random or signals are degraded by noise, performance degrades.

At present, systems operate in a noisy environment; therefore, quantum information remains stable only for very short times. It is necessary to minimise the number of layers in the algorithms being executed (i.e., make them shallow) or to use some form of error mitigation.

To improve this situation, we need to find ways to better stabilise the materials and devices being used; develop faster, more accurate measurement and feedback processes; and reduce the rates at which errors occur so that the overhead associated with correcting them is manageable. As the hardware, control software, and coding techniques continue to evolve, the current laboratory-based quantum information resource will become a reliable platform for developing scalable quantum technology.

Understanding Quantum Errors: Sources and Impact on Computation

Most errors occurring in quantum computing happen due to the interaction of the qubit with the environment in which it exists. This interaction leads to decoherence of the qubit, causing it to lose its quantum state. In addition to decoherence, a second type of error arises from noise in quantum systems; it stems from fluctuations in qubits, which make them unstable and introduce inaccuracies in calculation outcomes.

The sources of noise in quantum systems are varied and include electromagnetic interference and thermal fluctuations. Quantum errors are harmful to quantum computations because they corrupt the information that quantum algorithms rely on.

As previously mentioned, there are many different types of quantum errors. A small error at one point in the system can propagate throughout the system, resulting in a significant deviation from the desired outcome. As such, in order to develop effective error-correcting codes, it is essential to understand the types of errors that occur.

Researchers classify errors that occur in quantum systems into three categories:

• X-type errors (or bit-flip errors): These occur when the state of a qubit changes from 0 to 1 or vice versa.

• Z-type errors (or phase-flip errors): These occur when the sign of the relative phase between the 0 and 1 components of a superposition is flipped, which changes how the two components interfere.

• Depolarization errors (or degradation): These can be thought of as a random mix in which an X-type error, a Z-type error, or both together are applied with equal probability.
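The depolarization entry in the list above can be written out numerically. The sketch below is a minimal illustration, assuming the common parametrisation in which X, Y (a combined bit and phase flip), and Z are each applied with probability p/3 to a single-qubit density matrix stored as nested lists; the helper names are hypothetical.

```python
def matmul(a, b):
    """Multiply two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]


X = [[0, 1], [1, 0]]       # bit flip
Y = [[0, -1j], [1j, 0]]    # bit flip and phase flip together
Z = [[1, 0], [0, -1]]      # phase flip


def apply_pauli(pauli, rho):
    """Return P rho P; the Pauli matrices are their own conjugate transposes."""
    return matmul(matmul(pauli, rho), pauli)


def depolarize(rho, p):
    """Leave rho alone with probability 1 - p, otherwise apply X, Y, or Z (p/3 each)."""
    terms = [apply_pauli(pauli, rho) for pauli in (X, Y, Z)]
    return [
        [(1 - p) * rho[i][j] + (p / 3) * sum(t[i][j] for t in terms) for j in range(2)]
        for i in range(2)
    ]


rho_zero = [[1, 0], [0, 0]]  # a qubit that is definitely 0
print(depolarize(rho_zero, 0.75))
```

With p = 0.75 in this parametrisation the qubit ends up equally likely to be 0 or 1 with no remaining coherence, which is the fully depolarized state. Smaller values of p leave the state partly intact.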

Because each type of error requires a different mitigation technique, multiple approaches will likely be necessary to address all errors.

Quantum Error Correction aims to preserve the accuracy of quantum information throughout quantum computations.

By detecting and correcting errors in quantum systems, you will help ensure that quantum calculations achieve sufficient fidelity to realise the full potential of quantum computing.

Ongoing technological advancements to improve error detection and correction in quantum systems will support the development of robust and scalable quantum computers.

by Steve Johnson (https://unsplash.com/@steve_j)

Types of Quantum Errors

| Error Type | Description | Cause |
|---|---|---|
| Bit Flip | Qubit flips state | Noise/interference |
| Phase Flip | Phase changes incorrectly | Environmental disturbance |
| Decoherence | Loss of quantum state | Interaction with environment |
| Gate Error | Faulty quantum operation | Hardware imperfections |

Example: A qubit exposed to thermal noise may lose coherence, corrupting calculations.

Source:

- Google Quantum AI: https://quantumai.google
- MIT Quantum Research: https://web.mit.edu

Classical vs. Quantum Error Correction: Key Differences and Challenges

Although both classical and quantum error correction aim to protect information against errors that can corrupt or destroy it, they take fundamentally different approaches because of the distinct physical properties of each system.

Classical error correction operates on binary data (0s and 1s) and employs methods such as parity checking and Hamming codes. These methods detect and correct errors effectively by adding redundancy.
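As a concrete classical example, the sketch below implements the Hamming(7,4) code in its usual textbook layout, with parity bits at positions 1, 2, and 4. The function names are chosen here for illustration. Three parity checks are enough to locate and repair any single flipped bit among the seven.

```python
from itertools import product


def hamming74_encode(data):
    """Encode 4 data bits as the 7-bit codeword [p1, p2, d1, p4, d2, d3, d4]."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(received):
    """Return (data bits, error position); position 0 means no error was detected."""
    c = list(received)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    position = s1 + 2 * s2 + 4 * s4  # the syndrome spells out the position in binary
    if position:
        c[position - 1] ^= 1  # repair the flipped bit
    return [c[2], c[4], c[5], c[6]], position


def corrects_every_single_error():
    """Check all 16 data words against all 7 possible single-bit errors."""
    for data in product((0, 1), repeat=4):
        codeword = hamming74_encode(data)
        for index in range(7):
            corrupted = list(codeword)
            corrupted[index] ^= 1
            decoded, located = hamming74_decode(corrupted)
            if decoded != list(data) or located != index + 1:
                return False
    return True


print(hamming74_encode([1, 0, 1, 1]))
print(corrects_every_single_error())
```

Note that the decoder reads every received bit directly, which is exactly the step a quantum code cannot take with its data qubits.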

Quantum error correction is more complex than its classical counterpart because quantum information exists in a superposition (characterised by amplitudes and phases), whereas classical information exists in a single, definite state. Detecting and correcting errors is therefore substantially more difficult for quantum systems than for classical ones.

Additionally, the “no-cloning” theorem of quantum mechanics prohibits the creation of an exact copy of an arbitrary quantum state. This rules out the simplest classical strategy, storing several identical copies of the data, so quantum codes must obtain their redundancy by spreading one logical state across several entangled qubits instead.

There are also key differences in the type of information that classical and quantum error correction address, and in how each corrects the errors that occur:

• What type of data does each address? Classical error correction protects bits; quantum error correction protects qubits.

• How different are classical errors from quantum errors? Classical errors are generally simpler to understand and handle. Quantum errors are complicated by superposition and entanglement.

• How does each system correct errors? Classical systems use redundancy directly to detect errors. Quantum systems are constrained by the no-cloning theorem and by measurement collapse, so they must infer errors indirectly through measurements that do not read the encoded qubit.

Quantum errors can also become correlated across several qubits as they spread through entangling operations, which calls for more sophisticated mathematical tools when implementing quantum error correction.

Quantum error correction remains an active area of research, particularly regarding its practical application. Developing effective ways to implement it is necessary to achieve practical reliability in quantum computing systems.

Classical vs Quantum Error Correction

| Feature | Classical Error Correction | Quantum Error Correction |
|---|---|---|
| Data Type | Bits (0 or 1) | Qubits (superposition) |
| Error Type | Bit flips | Bit + phase flips |
| Copying Data | Allowed | Not allowed (no-cloning) |
| Detection | Straightforward | Indirect measurement |
| Complexity | Low | Extremely high |

Insight: Quantum error correction is fundamentally harder due to fragile qubit states and no-cloning rules.

Source:

- IBM Quantum Learning: https://quantum.ibm.com
- Nature Quantum Information: https://www.nature.com

Quantum error correction: It protects fragile quantum information, making scalable quantum machines stable and reliable

The goal of quantum error correction is to counter the instability of quantum computers as they grow larger. For certain problems, quantum computers can perform calculations much faster than classical computers thanks to the unique properties of qubits: they can exist in multiple states simultaneously (known as superposition), and two or more qubits can be entangled, so that their measurement outcomes are strongly correlated.

However, qubits are so sensitive to their environment that even slight disturbances (such as heat, electromagnetic interference, poor calibration, crosstalk, and defects within materials) can change the state of a qubit. These disturbances produce several types of errors: a qubit can undergo a bit-flip error or a phase-flip error, “leak” into a state outside the two it is meant to occupy, or give an incorrect measurement result. The more errors occur in a system, the less reliably its qubits interfere with each other to produce accurate results.

Quantum Error Correction enables the use of large-scale quantum systems.

Quantum error correction employs redundancy to encode a single logical qubit onto multiple physical qubits. The logical qubit is spread across all of the physical qubits so that no single physical qubit holds the logical information on its own. Then, with the aid of helper qubits, the machine performs a series of specific checks (called syndrome measurements) on the physical qubits. These checks identify whether an error has occurred and, if so, its nature, without revealing the actual logical value being computed.

Once the relevant syndrome data has been collected from the physical qubits, the machine’s classical decoder analyses it to determine the most likely error pattern. The machine then uses the decoder’s output to correct errors (when possible) or to track them and compensate during subsequent operations. This detect-decode-correct cycle repeats continuously for as long as the computation runs, preventing minor errors from escalating into catastrophic failures.
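The decoding step can be sketched with a lookup table. The example below is a simplified illustration, not a surface-code decoder: it assumes a classical five-bit repetition code, parity checks on neighbouring bits, and independent bit flips, so that the lowest-weight error matching a syndrome is also the most likely one. All function names are hypothetical.

```python
from itertools import product


def syndrome(bits):
    """Parity of each neighbouring pair; all zeros means no error is detected."""
    return tuple(bits[i] ^ bits[i + 1] for i in range(len(bits) - 1))


def build_decoder(n):
    """Map every syndrome to the lowest-weight error pattern that produces it."""
    table = {}
    for error in sorted(product((0, 1), repeat=n), key=sum):
        table.setdefault(syndrome(error), error)  # first seen has the lowest weight
    return table


def correct(bits, table):
    """Undo the error pattern the decoder considers most likely."""
    guess = table[syndrome(bits)]
    return [b ^ e for b, e in zip(bits, guess)]


table = build_decoder(5)
print(correct([1, 1, 0, 1, 0], table))  # two flips on 11111 are repaired
print(correct([1, 1, 1, 0, 0], table))  # three flips on 00000 are misread as 11111
```

The decoder only ever sees the syndrome, never the stored value. It succeeds whenever at most two of the five bits have flipped and fails when three or more have, which is why a larger code tolerates more errors.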

One of the chief objectives of quantum error correction is to achieve fault-tolerant operation. This objective is achieved by operating below a predetermined error threshold while increasing the size of the quantum code, thereby greatly decreasing the probability of a logical error. One common method for creating a fault-tolerant quantum computer is to use surface codes. Surface codes have several inherent benefits, such as the ability to operate via localised qubit interactions and the ability to be constructed in a highly structured way; however, their construction requires a large number of qubits, high-speed measurement capabilities, and continuous real-time control.

In conclusion, quantum error correction represents the “reliability layer” which translates the unreliable qubits utilised in quantum computers into reliable “logical building blocks”. As qubit reliability increases and codes/decoders become more efficient, quantum error correction will be the “core enabling capability” for long-term reliable and accurate computations on scalable quantum computers.

Core Principles of Quantum Error Correction Codes

Large-scale, reliable quantum computers are not possible without quantum error correction. It prevents decoherence and quantum noise from destroying fragile quantum states, and it is required to keep quantum information accurate throughout every process in the quantum computer.

The most common way to implement quantum error-correction methods is by using redundancy. Redundancy in quantum systems is achieved through encoding the quantum information of a single logical qubit into multiple physical qubits. Through redundancy, we can detect and correct any errors that may have occurred in individual qubits, thus protecting the original quantum information encoded.

Another fundamental component of quantum error correction is entanglement. Entanglement is a property of quantum systems that links two or more qubits so that they share a joint state. Encoding entangles the physical qubits, so the logical information lives in their joint state rather than in any one qubit; an error on a single qubit then shows up in joint parity checks without exposing the encoded value. In this way, entanglement helps maintain the integrity of the quantum state.

There are three types of errors that can occur while a quantum computer is operating that are corrected using a quantum error correction code:

1. Bit flip errors: These swap the basis states of a qubit, turning 0 into 1 and vice versa.

2. Phase flip errors: These change the sign of the relative phase between the components of a superposition.

3. A bit/phase flip error: This is a combination of the first two applied to the same qubit.

Quantum error-correction codes also use ancillas. An ancilla is an additional qubit that holds no data; the code uses it to detect and monitor errors as they occur in the qubits that do hold data (called “data” qubits).
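How an ancilla picks up error information without reading the data can be seen in a small simulation. The sketch below is a minimal illustration, assuming two data qubits in an encoded state, one ancilla starting in 0, and a state stored as a dictionary from bit tuples to amplitudes. The helper names and the amplitudes 0.6 and 0.8 are arbitrary choices for the example.

```python
def cnot(state, control, target):
    """Flip the target bit of every basis state in which the control bit is 1."""
    new_state = {}
    for bits, amplitude in state.items():
        flipped = list(bits)
        if bits[control] == 1:
            flipped[target] ^= 1
        new_state[tuple(flipped)] = amplitude
    return new_state


def apply_x(state, qubit):
    """Apply a bit-flip error to one qubit."""
    return {
        bits[:qubit] + (bits[qubit] ^ 1,) + bits[qubit + 1:]: amplitude
        for bits, amplitude in state.items()
    }


def attach_ancilla_and_check_parity(data_state):
    """Add an ancilla in 0 and copy the parity of data qubits 0 and 1 into it."""
    state = {bits + (0,): amplitude for bits, amplitude in data_state.items()}
    state = cnot(state, control=0, target=2)
    state = cnot(state, control=1, target=2)
    return state


def ancilla_outcome_probabilities(state):
    """Probability of reading 0 or 1 on the ancilla (the last qubit)."""
    probabilities = {0: 0.0, 1: 0.0}
    for bits, amplitude in state.items():
        probabilities[bits[-1]] += abs(amplitude) ** 2
    return probabilities


logical = {(0, 0): 0.6, (1, 1): 0.8}  # encoded state: 0.6 on 00, 0.8 on 11
clean = attach_ancilla_and_check_parity(logical)
damaged = attach_ancilla_and_check_parity(apply_x(logical, 0))
print(ancilla_outcome_probabilities(clean))    # ancilla reads 0: no error
print(ancilla_outcome_probabilities(damaged))  # ancilla reads 1: an error occurred
```

In both cases the ancilla outcome is certain and the same for every component of the superposition, so measuring it reveals the error but says nothing about the amplitudes 0.6 and 0.8.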

by Marek Pavlík (https://unsplash.com/@marpicek)

Quantum Error Correction through Surface Codes: Surface codes are a widely used approach to quantum error correction. They arrange qubits on a 2D grid so that local operations within each small patch of the grid can detect errors. Because detection relies only on local operations, surface codes are well suited to hardware in which each qubit interacts only with its neighbours.

Research into quantum error correction continues, with the aim of establishing more feasible and efficient methods for correcting errors in quantum computers. Understanding its principles is essential to building fault-tolerant quantum machines, and it will help improve reliability, reduce errors, and increase performance as our knowledge of quantum information grows.

Error Correction Codes: Error correction codes detect and fix computational inaccuracies efficiently

Quantum computers suffer from errors caused by noise, as do classical computers. Quantum error correction codes were developed to protect information in quantum computers against these noise-induced errors. Like the codes used in classical computing and communication systems, they add redundancy to information, allowing errors to be detected and, in many cases, corrected. The simplest classical example is to repeat a bit n times and then take a majority vote over the n copies. There are, however, far more reliable and efficient error-correction codes than this simple repetition scheme.

More efficient codes use parity checks to determine where the most likely errors are while minimising overhead. Error correction codes of this kind reduce the number of errors that survive in noisy physical environments. There are many families of classical error correction codes, including Hamming codes, BCH codes, Reed-Solomon codes, low-density parity-check (LDPC) codes, convolutional codes, and turbo codes.

Each of these families of codes provides a trade-off among the overhead required to encode the information, the number of errors they can correct, their decoding speed, and the types of noise they are best suited to handle. Most modern systems, including cellular networks, long-distance space communications, and data storage, require fast decoders capable of correcting errors in real time using either probabilistic inference or iterative message passing.
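The repeat-and-vote scheme mentioned earlier in this section is the easiest of these codes to write down. The sketch below is a minimal illustration, assuming a channel that flips each bit independently with probability p; the function names and the seeded random generator are choices made for the example.

```python
import random


def encode(bit, n):
    """Repeat one bit n times."""
    return [bit] * n


def noisy_channel(bits, p, rng):
    """Flip each bit independently with probability p."""
    return [b ^ (rng.random() < p) for b in bits]


def decode(bits):
    """Majority vote over the received copies (use an odd number of copies)."""
    return int(sum(bits) > len(bits) / 2)


def failure_rate(n, p, trials, seed=0):
    """Estimate how often the vote returns the wrong bit."""
    rng = random.Random(seed)
    failures = sum(decode(noisy_channel(encode(1, n), p, rng)) != 1 for _ in range(trials))
    return failures / trials


print(decode([1, 0, 1, 1, 0]))  # three of five copies survive, so the vote gives 1
for n in (1, 3, 5):
    print(n, failure_rate(n, p=0.1, trials=10_000))
```

The cost is visible immediately: protecting one bit with five copies uses five times the storage, which is the overhead that the more efficient code families listed above are designed to reduce.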

Protecting quantum machines from errors is more challenging than protecting classical computers. This is because quantum bits (qubits) experience both bit-flip errors and phase-flip errors. Furthermore, directly measuring the data contained within a qubit destroys it.

Thus, Quantum Error Correction provides a way of protecting delicate Quantum States from errors in a way that does not reveal the state of the encoded qubit. Rather than measuring the encoded qubit to check if an error has occurred, the system uses “parity-like” checks, called syndromes, that indicate whether an error has occurred and, if so, what type; these measurements preserve the encoded quantum information.

Surface codes and similar codes are “local”: each check involves only neighbouring qubits. When the physical qubits have sufficiently low error rates, these codes significantly reduce the logical error rate as the code size grows.

Quantum error correction offers a way to transform noisy systems into ones that can deliver accurate results for long calculations, much as classical error correction codes did for noisy communication and storage.

Classical vs. Quantum Error Correction: To compare candidate codes, classical or quantum, identify the code best suited to your noise model and the limitations of your system. For quantum computing, those limitations include gate fidelities, measurement speed, connectivity, and the number of qubits available. As each of these improves, the overhead of quantum error correction decreases, and eventually larger, fault-tolerant computations become feasible.

Note: The surface code is one example of a local code, meaning that each of its checks involves only neighbouring qubits; the two terms are not synonymous. “Local” is used here as shorthand for “local quantum error correction,” a usage that does appear in the literature.

Note: Quantum Error Correction is being developed by many organisations, including Google, Microsoft, IBM, Rigetti Computing, IonQ, and others.

Qubit Error Correction: Qubit error correction protects fragile quantum bits from environmental disturbances

Constructing a functional quantum computer appears unlikely without a means of correcting errors in qubits. In any physical device, qubits continuously interact with their surrounding environment. As a result, the coherence of a qubit’s state can be lost through thermal fluctuations, stray electromagnetic radiation, imprecise microwave control pulses, unintended excitations to higher energy levels, and slow drift in device parameters over time.

Two types of errors can result from these interactions: (a) “bit flip” errors, which swap the 0 and 1 states; and (b) “phase” errors, which degrade or randomise the relative phase needed for the interference effects that make quantum computation possible.

Quantum computers cannot use the same mechanisms to correct errors as classical computing systems. Classical systems can simply use “bit duplication” and “majority vote.” However, an unknown quantum state cannot be copied (the no-cloning theorem), and measuring a qubit collapses its superposition, so neither duplicating a qubit’s state nor reading it out directly is an option.
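
For comparison, the classical scheme can be sketched in a few lines of Python. This is a minimal illustration, assuming a three-copy repetition code and independent bit flips with probability p; the function names are illustrative and not taken from any library.

```python
def encode(bit: int, copies: int = 3) -> list[int]:
    """Classical 'bit duplication': repeat the bit several times."""
    return [bit] * copies


def majority_vote(bits: list[int]) -> int:
    """Decode by returning the value held by most copies (odd length assumed)."""
    return int(sum(bits) * 2 > len(bits))


def logical_error_rate(p: float) -> float:
    """Chance that majority voting over 3 copies fails, i.e. 2 or 3 copies flip."""
    return 3 * p**2 * (1 - p) + p**3


received = encode(1)
received[0] ^= 1                      # one copy is corrupted in transit
print(majority_vote(received))        # the vote still recovers the original bit
print(logical_error_rate(0.01))       # much smaller than the raw 1% flip rate
```

Both steps rely on freely copying and reading the data, which is exactly what a qubit does not allow.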

To overcome these restrictions, quantum error correction encodes a single logical qubit in the joint state of multiple physical qubits. The encoding is chosen so that measuring certain joint properties of the physical qubits (the syndromes) reveals whether an error has occurred, but not the quantum state of the logical qubit.

To keep the computation reliable, we repeatedly measure the syndromes using ancilla qubits and use the resulting patterns to identify whether a bit flip or a phase flip occurred on an individual physical qubit, without ever learning the state of the logical qubit. Based on the history of syndrome readings, the decoder determines the best correction (or frame update) to restore the logical qubit to its intended state. The detection, decoding, and correction loop therefore runs continuously until the quantum computer completes its final calculation.
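
The detect, decode, and correct loop can be illustrated with the smallest textbook example, the three-qubit bit-flip code. The sketch below is a simplification under stated assumptions: it uses a plain NumPy state vector, handles only a single bit flip (phase flips need a complementary code), and reads the parities directly from the simulated state rather than modelling ancilla qubits. All function names are illustrative.

```python
import numpy as np


def encode(a: complex, b: complex) -> np.ndarray:
    """Encode a|0> + b|1> as a|000> + b|111> (8 amplitudes)."""
    state = np.zeros(8, dtype=complex)
    state[0b000] = a
    state[0b111] = b
    return state


def apply_x(state: np.ndarray, qubit: int) -> np.ndarray:
    """Bit-flip `qubit` (0 = leftmost) by permuting basis-state amplitudes."""
    mask = 1 << (2 - qubit)
    out = np.empty_like(state)
    for idx in range(8):
        out[idx ^ mask] = state[idx]
    return out


def syndrome(state: np.ndarray) -> tuple[int, int]:
    """Parities of qubits (0,1) and (1,2); identical for every populated basis state."""
    idx = int(np.flatnonzero(np.abs(state) > 1e-12)[0])
    bits = [(idx >> 2) & 1, (idx >> 1) & 1, idx & 1]
    return bits[0] ^ bits[1], bits[1] ^ bits[2]


# Lookup-table decoder: which qubit to flip back for each syndrome.
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(state: np.ndarray) -> np.ndarray:
    qubit = CORRECTION[syndrome(state)]
    return state if qubit is None else apply_x(state, qubit)


logical = encode(0.6, 0.8)
noisy = apply_x(logical, 1)                        # bit flip on the middle qubit
print(syndrome(noisy))                             # points at the flipped qubit
print(np.allclose(correct(noisy), logical))        # amplitudes are restored
```

The syndrome depends only on which qubit was flipped, not on the amplitudes 0.6 and 0.8, which is why the check does not disturb the encoded information.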

Quantum Computing Stability via Quantum Error Correction. Many current roadmaps for quantum computing centre on the surface code as their approach to quantum error correction. The surface code arranges qubits in a two-dimensional array and applies local checks, each involving only a few neighbouring qubits. It is compatible with many of the emerging architectures for physically implementing a quantum computer. However, it comes with a trade-off: achieving a low logical error rate requires a large number of physical qubits per logical qubit.

The surface code also requires quick measurement and fast feedback. As the performance of the physical components being developed for the quantum computer (increasing coherence times, higher gate fidelity, and faster readout speed) improves, the overhead required to support fault-tolerant operations will decrease, and the practicality of performing fault-tolerant operations will increase.
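
To make the qubit overhead concrete, the sketch below counts the physical qubits in one surface-code patch. It assumes the commonly used “rotated” layout, in which a code of distance d uses d² data qubits and d² − 1 measurement (ancilla) qubits; other layouts have different counts, and the function name is illustrative.

```python
def surface_code_qubits(distance: int) -> dict[str, int]:
    """Physical qubits in one rotated surface-code patch (layout is an assumption)."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance is assumed to be an odd integer >= 3")
    data = distance ** 2            # qubits that hold the encoded state
    ancilla = distance ** 2 - 1     # qubits used to measure the local checks
    return {"data": data, "ancilla": ancilla, "total": data + ancilla}


for d in (3, 5, 11, 23):
    print(d, surface_code_qubits(d))
```

Under this assumption the total grows roughly as 2d², so doubling the code distance roughly quadruples the number of physical qubits per logical qubit.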

Leading Error Correction Codes: Surface Codes, Topological Codes, and More

Quantum Error Correction Techniques

Quantum error correction utilises coding schemes that safeguard quantum bits (qubits) from damage. Two terms come up most often: the surface code, and the broader family of topological codes to which it belongs. Both protect the integrity of quantum information.

One reason surface codes have become the preferred choice for error correction is their tolerance to errors. Surface codes arrange qubits in a grid, and the interactions between each qubit and its neighbours allow errors to be detected locally. This makes error correction with surface codes highly efficient to implement.

Topological codes generalise this idea. A topological code encodes information in global, geometric properties of the qubit layout rather than in any single qubit, so local errors cannot easily corrupt the encoded information. This gives topological codes a high degree of built-in stability, although they still rely on repeated syndrome measurement and decoding.

The primary features of these quantum error-correcting codes include:

• Localised Error Detection: Errors are localised to specific regions (local areas) and do not affect entire systems (global areas).

• Geometric Coding: Information is encoded into the geometry of the system (how it is physically structured) rather than into the properties of the individual qubits.

• High Robustness: The codes can identify and correct multiple different types of errors.

New codes continue to be developed to further increase the potential for error correction. Researchers are also exploring hybrid codes that combine elements of different code families, such as the surface code and other topological codes, in order to leverage the strengths of each.

Bosonic codes, which encode a qubit in the continuous-variable states of an oscillator, represent a different paradigm for error correction. They give developers an additional design dimension and could potentially provide greater protection against quantum noise.

The continued development of error-correcting codes will make it feasible to build large-scale quantum computers. Today’s developments will provide the building blocks for the first practical quantum computers.

These codes will provide a solid basis for operating quantum computers and will enable developers to build reliable computing platforms that further advance the state of the art in quantum technology.

Quantum Error Correction Codes Comparison

| Code Type | Strength | Limitation |
|---|---|---|
| Surface Code | High fault tolerance | Requires many qubits |
| Shor Code | First QEC method | Complex implementation |
| Steane Code | Efficient encoding | Limited scalability |
| Topological Codes | Robust to noise | Experimental stage |

Insight: Surface codes are currently the most practical path toward scalable quantum systems.

Source:

- IBM Quantum Documentation: https://quantum.ibm.com
- Microsoft Quantum Research: https://quantum.microsoft.com

Breakthroughs in Qubit Error Correction: Recent Advances

Quantum computing has also seen several important advances in qubit error correction. These advances are enabling researchers to develop practical, reliable, and scalable quantum computers.

The most notable advances include new error-correction algorithms that can detect and correct errors that older algorithms could not, thereby improving the system’s overall stability. This makes larger, more complex quantum computations possible.

Classical error correction algorithms have also been used by quantum researchers. Researchers modified classical error-correction algorithms to address the quantum-specific challenges they face. As a result, the researchers developed significantly more robust error-correction frameworks that better enable qubit stability during operation.

Researchers are also making advancements in quantum hardware that will enable the development of error-correction algorithms. Improved qubit connectivity and longer coherence times will enable researchers to efficiently utilise their new error-correction algorithms.

Recent developments in error correction have included:

• Improvement of surface codes – Refining surface codes to make them more efficient and to keep error propagation as small as possible.

• Development of topological codes – Creating new topological codes through novel methods of preparing and using topological quantum states.

• Machine Learning Approaches – Developing machine learning algorithms that can infer likely errors from syndrome data and suggest corrections automatically.

Several new materials and technologies are being researched to improve error correction. Researchers are developing new superconducting materials that can be made into qubits with greater stability and lower error rates, which reduces the burden on error correction and makes fault tolerance easier to reach.

Interdisciplinary research collaborations have also dramatically advanced the field of quantum error correction. By collaborating to develop new quantum error correction techniques, researchers from different disciplines such as physics, computer science, and engineering have gained a better understanding of the needs of quantum systems and have therefore created more comprehensive solutions to these challenges.

Researchers are making rapid advances in quantum error correction, but many challenges remain. A major effort is underway to identify new methods to reduce the overhead of error correction. The goal is an efficient error-correction method that uses minimal resources (physical qubits, time, and classical processing).

It is very promising to see the momentum in qubit error correction, and it is reasonable to believe that future advances will provide researchers with additional capabilities in their quantum computers. As error-correction methods become more capable, they will be crucial to providing the foundation for the practical implementation of quantum computing.

Qubit Error Rates vs Required Qubits

| Metric | Value |
|---|---|
| Physical Qubits Needed per Logical Qubit | 1000+ |
| Current Error Rate | 1% |
| Target Error Rate | <0.001% |
| Logical Qubit Stability | Improving rapidly |
| Scalability | Extremely high |

Key Insight: A thousand or more physical qubits may be needed to create one stable logical qubit.

Source:

- Google Quantum AI Research: https://quantumai.google
- IBM Quantum Roadmap: https://www.ibm.com/quantum

Integrating Error Correction into Quantum Hardware

Hardware-based error correction in quantum computing is a significant advancement with the potential to keep qubits operating reliably in the presence of environmentally induced errors. Practical applications of quantum computers will need hardware-based error correction so that devices operate reliably over long computations and extended periods of use.

The biggest problem in integrating error correction seamlessly into current qubit architectures is maintaining qubit coherence while error correction is running. Devices that combine qubits with dedicated error-correction circuitry will require sophisticated designs and high-precision engineering.

Researchers are developing both hardware and circuitry capable of providing real-time error correction for qubits, improving overall computing accuracy and efficiency.

Key requirements for hardware approaches to error correction in quantum devices:

• Scalability (continuing to work as more qubits are used)

• Real-time processing

• Compatibility with existing systems (no adverse effect on overall system performance)

Hardware error correction for quantum computing can also be beneficial for the future development of long-lived qubits. New materials are being studied that could enable additional control over minimising decoherence, thereby improving the longevity of quantum states. The combination of the characteristics of the new materials and those of error correction could ultimately lead to even better quantum devices.

Quantum computing is likely to greatly benefit from hardware error correction. Hardware error correction will be required to create fault-tolerant quantum computing.

Quantum Stability: Quantum stability ensures qubits remain coherent during complex operations

Quantum stability is about how well a quantum computer can keep a set of qubits coherent throughout a sequence of quantum gates, measurements, and interactions with each other. Coherence is required for the superposition and entanglement that produce the interference most quantum algorithms rely on. When coherence is lost, the results become random and unreliable.

Qubits are very delicate because they react to almost everything around them: temperature fluctuations, stray electric and magnetic fields, misshapen control pulses, defects in the materials used to build the qubits, interactions between adjacent qubits, and radiation.

Keeping qubits in a quantum state gets progressively harder as the number of qubits grows and as circuits become “deeper” (that is, as more gate operations are applied in sequence). It is relatively easy to keep a single qubit coherent for an extended period. Maintaining coherence at scale (e.g., across thousands to tens of thousands of quantum gate operations), however, is considerably more difficult.
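
A rough calculation shows why depth matters so much. The sketch below assumes a deliberately pessimistic model in which gate errors are independent and any single error spoils the run; the error rate of 0.1% is a hypothetical example value, and the function names are illustrative.

```python
import math


def circuit_success_probability(gate_error: float, num_gates: int) -> float:
    """Chance that no gate fails, assuming independent errors."""
    return (1.0 - gate_error) ** num_gates


def max_gates_for_target(gate_error: float, target_success: float) -> int:
    """Largest gate count that still meets the target success probability."""
    return math.floor(math.log(target_success) / math.log(1.0 - gate_error))


for gates in (100, 1_000, 10_000):
    print(gates, round(circuit_success_probability(0.001, gates), 4))

print(max_gates_for_target(0.001, 0.5))   # gates available before success drops below 50%
```

Under these assumptions the success probability decays exponentially with the number of gates, so a circuit of tens of thousands of gates is out of reach without error correction.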

Quantum Stability maintains qubit coherence through long quantum circuits.

Stability of quantum systems is generally assessed using several measures, such as the coherence time (or relaxation time), the dephasing time, the fidelity of quantum gate application, the fidelity of system measurement, and the long-term stability of the calibration methods used on the system.

To achieve good values for these measures, the technology must improve in several areas at once: reducing defects in materials and in the manufacturing process, reducing thermal noise and stray electric and magnetic fields in the cryogenic environment, developing more sophisticated shielding and filtering, increasing the precision of microwave or laser control, and optimising calibration methods so that they account for slow drift in the characteristics of system components.

Quantum stability is the real-world capability to maintain qubit coherence in a Quantum machine across all gates, measurements, and interactions on the device. Coherence in a qubit enables superposition and entanglement, producing the interference patterns required by algorithms. Once the coherence in a qubit has been lost due to some form of disturbance, the results of computations using it will be noisy and unreliable.

The major challenge facing researchers is that qubits are highly sensitive to environmental disturbances. These disturbances may include small changes in temperature, small changes in external magnetic fields, small errors in control pulses, physical defects in the materials used for the qubits, crosstalk between qubits in a circuit, and even random radiation events.

Typically, engineers use several parameters to assess the stability of a qubit, which include: the qubit’s relaxation time (how fast the energy contained within a qubit decays), the qubit’s dephasing time (how quickly the phase information stored within a qubit is distorted), gate fidelity, measurement fidelity and calibration stability over time.
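
The relaxation and dephasing times can be turned into a quick estimate of how much of a qubit’s state survives a given circuit. The sketch below assumes simple exponential decay, which is a common first approximation and not a full noise model; the T1, T2, and gate-duration values are hypothetical.

```python
import math


def excited_population(t: float, t1: float) -> float:
    """Probability that a qubit prepared in |1> is still excited after time t."""
    return math.exp(-t / t1)


def phase_coherence(t: float, t2: float) -> float:
    """Fraction of superposition contrast remaining after time t."""
    return math.exp(-t / t2)


# Hypothetical device numbers, all in microseconds.
T1_US, T2_US, GATE_US = 100.0, 80.0, 0.05

for gates in (100, 1_000, 10_000):
    elapsed = gates * GATE_US
    print(gates,
          round(excited_population(elapsed, T1_US), 3),
          round(phase_coherence(elapsed, T2_US), 3))
```

The ratio of gate duration to coherence time sets a rough budget for how many operations fit before the state has decayed.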

Improvements in all of these areas will require advances at every level of the stack. This includes new and improved materials and manufacturing methods to reduce defects, a cleaner cryogenic environment to reduce noise, shielding and filters to limit electromagnetic interference, more precise and accurate microwave or laser control systems, and improved calibration algorithms that account for the slow drift of calibration settings over time.

Quantum Resilience: Quantum resilience strengthens systems against noise and decoherence

The goal of quantum resilience is to assess how well a quantum device can maintain reliable output in an uncontrolled environment and with imperfect equipment. A qubit (quantum bit) accumulates errors as it interacts with the thermal environment, stray electromagnetic fields, and microscopic imperfections in the physical structure of the hardware. Examples of such errors are energy relaxation, phase drift, leakage into an incorrect state, and crosstalk between neighbouring qubits. Individual qubit errors add up quickly as systems scale, degrading what was once a clean interference pattern into random outputs.

Quantum Resilience is focused on minimising the impact of errors on the output.

Quantum Resilience enables the production of reliable data from devices that would otherwise be subject to the degrading effects of long term noise.

Quantum Resilience is not a singular characteristic. Rather, it is an approach that spans all levels. At the hardware level, this includes improving material quality, employing shielding and filtering, ensuring steady cryogenic operation, and improving designs to reduce sensitivity to environmental variability. At the control level, this includes calibration, stabilising phase drift, pulse shaping to reduce errors caused by control signals, and real-time monitoring to correct for deviations.

At the software level, this includes compilation techniques to reduce circuit complexity, scheduling to avoid utilising noisy qubits, and error mitigation to identify and eliminate bias introduced by noisy measurements until complete correction is possible.
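
One simple form of error mitigation corrects the bias that noisy readout introduces. The sketch below assumes a single qubit whose readout errors have been calibrated beforehand; the error rates and the function names are hypothetical, and real toolkits provide their own, more complete routines.

```python
import numpy as np


def confusion_matrix(p_read1_given0: float, p_read0_given1: float) -> np.ndarray:
    """Column j holds the distribution of readout results when state j was prepared."""
    return np.array([
        [1 - p_read1_given0, p_read0_given1],
        [p_read1_given0, 1 - p_read0_given1],
    ])


def mitigate(measured: np.ndarray, matrix: np.ndarray) -> np.ndarray:
    """Undo the readout bias by solving matrix @ true = measured."""
    return np.linalg.solve(matrix, measured)


# Hypothetical calibration: 2% of 0s are read as 1, 5% of 1s are read as 0.
M = confusion_matrix(0.02, 0.05)
true_probs = np.array([0.7, 0.3])
measured_probs = M @ true_probs          # what the noisy readout would report
print(measured_probs)
print(mitigate(measured_probs, M))       # recovers the unbiased distribution
```

With a finite number of shots the corrected values can fall slightly outside the range 0 to 1, so practical schemes usually add a constrained fit on top of this inversion.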

Quantum resilience is about ensuring that quantum computing can scale reliably. Reliability means continuing to deliver good performance over time. Compared with short demonstrations, production workloads run longer and involve more components, which increases the potential for things to go wrong. A resilient system maintains a stable level of performance across repeated executions of a workload (over days or weeks) and continues to perform well as the number of qubits and other resources increases.

Furthermore, a resilient system enables consistent benchmarks and predictable scaling. Both of these are required to measure the engineering improvements you have made and to establish confidence with your users.

In addition to enabling reliable execution and predictable scaling, a resilient system would also allow continuous operation and recovery from failures.

Quantum Error Correction enables fault-tolerant quantum computing by encoding logical qubits with many physical qubits and repeatedly measuring syndromes to identify errors while preserving quantum information.

While short- and mid-term extensions to the operating lives of today’s noisy quantum computers are likely achievable through a combination of improved hardware and active error-mitigation strategies, long-term reliability is ultimately likely to come from the fault tolerance provided by QEC.

When the physical error rate is below a certain threshold, the logical error rate can be reduced significantly; thus, computations of extremely large depth can be run reliably. Therefore, as we move toward reliable quantum services, we observe that our concept of quantum resilience evolves from “making the most effective use of noisy hardware” to “keeping noise from affecting the quantum computation.”

Thus, quantum resilience will serve as the link between unreliable qubits and reliable quantum services. Ultimately, by developing stable hardware, operating under control, and applying robust coding techniques, we can maintain the continued accuracy of quantum systems despite decoherence and noise inherent to all physical devices.

Quantum Resilience and Stability: Ensuring Long-Term Quantum Information Integrity

Quantum resilience refers to how well a quantum system continues to function when some of its components fail, as well as how well it can maintain its data over time. Both quantum resilience and quantum stability help determine whether a quantum computer will work efficiently and correctly.

Errors in quantum systems can arise either from interaction with the environment or from imperfections within the system itself, such as control errors and crosstalk. If errors are left uncorrected, they can result in computational failure. Achieving quantum resilience therefore requires robust, reliable methods for correcting quantum errors that account for the unique characteristics of quantum systems.

Quantum stability, in turn, addresses not only transient (temporary) errors that cause a loss of quantum information but also the cumulative errors that build up over the course of a longer computation. Quantum stability is therefore important for practical quantum computing applications that require long operational lifetimes.

Methods for Increasing Stability in Quantum Systems & Enhancing Resilience:

• Quantum Error Correction Codes: By developing new quantum error correction codes, researchers can develop new methods of detecting & correcting a large number of different types of errors that occur on a quantum computer.

• Improving Coherence Times: The coherence time is how long a qubit retains its information. The better we can extend the coherence time, the better we will be at improving qubit performance and maintaining information over longer periods.

• Minimising Interference by Isolating Quantum Systems: Interference from environmental factors like temperature, electromagnetic fields, etc., causes an increase in error rates. Therefore, reducing or eliminating this type of interference by isolating quantum systems from their environment is one method to reduce error rates.

New research is underway to improve the above technologies, which will eventually enable reliable quantum machines. The continued development of error correction techniques will make the long-term integrity of quantum information more achievable than ever before. This will enable technological developments previously impossible.

Fault-Tolerant Quantum Computing: Building Reliable Quantum Machines

A fault-tolerant method for quantum computing is required to produce reliable results during computation. A quantum computer’s ability to run an algorithm with some level of accuracy will depend upon how fault-tolerant it is and, therefore, how well the system can correct for errors. Fault tolerance builds upon error correction by enabling the production of systems that can both operate effectively and endure over time.

Two of the most common ingredients of fault-tolerant systems are redundancy and error detection. Because a quantum state cannot simply be copied, redundancy here means spreading the information of each logical qubit across several physical qubits and designing operations so that a single fault stays correctable. This allows the system to remain operational even if a fault occurs. Systems built this way can detect errors early and thereby prevent them from affecting the final outcome.

A number of methods exist to create fault tolerance in quantum systems, including:

• Error-detecting codes, which build checks into the encoding so that the system can tell when an error occurs and allow for correction.

• Logical qubit encoding, which encodes a single qubit of data onto multiple physical qubits. The encoding is designed so that the data is difficult to corrupt even if an error occurs.

• Controlling error propagation throughout the quantum circuit so that the damage caused by an error does not spread.

Redundancy is also used in the design of quantum circuits for fault-tolerant systems. Since no qubit can be guaranteed free from errors, most developers aim to minimise the number of interactions between different qubits, which limits how far an error can spread and makes the circuit more efficient. Logical qubits are the main way of providing this redundancy.

A logical qubit is made up of many physical qubits. This design provides a “safety net” for errors: if an error happens, it will not destroy all of the information held by the logical qubit. Because the information is spread across many physical qubits, the chance that enough of them fail at the same time to corrupt it is very small. The physical qubits therefore act as a safeguard for the logical qubit, helping to ensure reliable results.

Another significant element in developing fault-tolerant quantum systems is the “quantum error threshold.” The threshold is the maximum physical error rate (per qubit, gate, or measurement) at which error correction still removes errors faster than it introduces them; above it, encoding no longer improves reliability. To illustrate the idea with a toy example, assume a 5-qubit system in which each qubit fails with a probability of 10% during a given computation, and suppose the encoding can recover from the failure of any single qubit.

The computation then goes wrong only if two or more qubits fail at the same time. As long as the probability of that event stays below the level the computation can tolerate, the 5-qubit processor should still provide correct answers. If that probability is too high, the processor may fail to produce an answer or may produce an incorrect one. Keeping physical errors below the quantum error threshold is therefore necessary to ensure the reliability of the processor’s answers.
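
The probability in this toy example can be worked out directly. The sketch below assumes the five qubits fail independently and that failures of two or more qubits are uncorrectable; the function name is illustrative.

```python
from math import comb


def prob_at_least(k: int, n: int, p: float) -> float:
    """Probability that at least k of n independent qubits fail, each with probability p."""
    return sum(comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(k, n + 1))


# 5 qubits, 10% failure probability each: chance that two or more fail together.
print(round(prob_at_least(2, 5, 0.10), 5))

# The same calculation with a tenfold better qubit.
print(round(prob_at_least(2, 5, 0.01), 5))
```

At 10% per qubit the encoded failure probability comes out at about 8%, only slightly better than a single unprotected qubit, while at 1% per qubit it drops to roughly 0.1%. The benefit of encoding grows quickly as the physical error rate falls.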

In addition, the development of fault-tolerant quantum computers relies on collaboration among physicists, engineers, and computer scientists. It is through their cooperative effort that new technologies and novel solutions are developed to create more reliable and efficient quantum processors.

Fault-Tolerant Quantum Computing Workflow

| Step | Process |
|---|---|
| Encoding | Logical qubits encoded across many physical qubits |
| Detection | Errors identified without collapsing state |
| Correction | Errors corrected using redundancy |
| Computation | Operations performed reliably |
| Verification | Output validated |

Example: A fault-tolerant system continuously corrects errors while running complex algorithms like Shor’s algorithm.

Source:

- Nature Quantum Computing: https://www.nature.com
- IBM Quantum Research: https://quantum.ibm.com

Fault-Tolerant Quantum: Fault-tolerant quantum systems continue operating accurately despite errors

Fault tolerance marks the transition from today’s experimental, error-prone quantum computers to the large-scale systems of tomorrow. The objective of fault tolerance in quantum computing is to perform quantum computation accurately even when an individual qubit, gate, or measurement intermittently fails. In practice, failures arise from decoherence, control errors, crosstalk, leakage, and readout noise, and they accumulate rapidly as circuit depth increases. An architecture for fault-tolerant quantum computing is designed so that small faults cannot grow into a wrong final answer.

The primary method of achieving fault tolerance is quantum error correction. This involves encoding a single logical qubit onto many physical qubits and using parity checks and syndrome measurements to identify the likely error pattern without measuring the encoded quantum state. The syndrome measurements are repeated, and classical decoding software determines the most likely sequence of errors, which is then used to apply corrections or frame updates. Quantum error correction is necessary because, without it, noise would ruin long quantum computations well before they reached the sizes needed to solve useful problems.
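
The decoding step can be sketched for the simplest possible case. The example below is a toy minimum-weight decoder for a classical-style repetition code with an odd number of bits, used here as a stand-in for the far more elaborate decoders that surface codes need; it assumes a single, noise-free round of syndrome measurement, and the function names are illustrative.

```python
def syndrome_of(error: list[int]) -> list[int]:
    """Parity of each neighbouring pair: 1 where the two bits disagree."""
    return [error[i] ^ error[i + 1] for i in range(len(error) - 1)]


def decode(syndrome: list[int]) -> list[int]:
    """Return the lowest-weight flip pattern that reproduces the syndrome."""
    candidate = [0]
    for s in syndrome:
        candidate.append(candidate[-1] ^ s)      # walk along the chain of checks
    complement = [bit ^ 1 for bit in candidate]  # the only other consistent pattern
    return min(candidate, complement, key=sum)


actual_error = [0, 0, 1, 0, 0]                   # one flip on a 5-bit code
guess = decode(syndrome_of(actual_error))
residual = [a ^ g for a, g in zip(actual_error, guess)]
print(guess, residual)                           # an all-zero residual means success
```

The decoder sees only the syndrome, never the data, and picks the most likely explanation. If three of the five bits flip, the most likely explanation is the wrong one and a logical error results, which is why the physical error rate has to be low for the scheme to pay off.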

The fault-tolerance threshold is also an important concept. When the physical error rate falls below a certain threshold, adding more physical qubits to each logical qubit significantly reduces the logical error rate. This is why hardware progress and progress in quantum error correction (QEC) are linked. Lower physical error rates mean less hardware overhead, and improved QEC techniques and decoding algorithms enable more logical operations to be performed on similar hardware.
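
The threshold behaviour is often summarised by a rule of thumb in which the logical error rate falls as a power of the ratio between the physical error rate and the threshold. The sketch below uses that heuristic, p_L ≈ A · (p / p_th)^((d + 1) / 2), for a code of distance d. The threshold of 1% and the prefactor A = 0.1 are assumed round numbers for illustration, not measured values for any device.

```python
def logical_error_rate(p_phys: float, distance: int,
                       p_threshold: float = 1e-2, prefactor: float = 0.1) -> float:
    """Heuristic logical error rate for a code of the given distance."""
    return prefactor * (p_phys / p_threshold) ** ((distance + 1) / 2)


def min_distance(p_phys: float, target: float,
                 p_threshold: float = 1e-2, prefactor: float = 0.1,
                 max_distance: int = 201) -> int:
    """Smallest odd distance whose heuristic logical error rate meets the target."""
    if p_phys >= p_threshold:
        raise ValueError("at or above threshold: a larger code does not help")
    for d in range(3, max_distance + 1, 2):
        if logical_error_rate(p_phys, d, p_threshold, prefactor) <= target:
            return d
    raise ValueError("target not reached within max_distance")


for p in (5e-3, 2e-3, 1e-3):
    print(p, min_distance(p, target=1e-12))
```

Under these assumptions, halving the physical error rate noticeably reduces the code distance required, which is the link between hardware quality and error-correction overhead described above.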

The moment the pieces fall into place, it’s possible that Fault-Tolerant Quantum Computers could reliably perform tens of millions, or possibly even billions, of logical operations.

Several roadmaps identify the surface code as the leading approach to quantum error correction, because it uses only local interactions and can therefore be applied on a wide range of hardware platforms.

The cost of surface-code error correction scales with the level of protection required. The first generation of fault-tolerant devices will likely need a large number of physical qubits to make a single logical qubit, fast measurement of qubit states, efficient classical decoding of the measurement results, and tightly integrated control logic.

However, once logical qubits have been made reliable by quantum error correction, more advanced quantum algorithms will become usable. These include quantum algorithms used to simulate the behaviour of chemical compounds, discover new materials, and analyse cryptographic protocols.

Fault-tolerant quantum capability is therefore not a single feature of a quantum computer but an engineering stack of components (hardware quality, control-logic stability, encoding, decoding, and verification). As quantum error correction improves and physical error rates fall, fault-tolerant quantum computers will evolve from demonstration devices showcasing the potential of quantum computing into dependable computing devices. Quantum error correction will provide the “reliability layer” necessary for developing scalable quantum computers.

Quantum Algorithms: Quantum algorithms leverage superposition and entanglement for faster problem-solving

Quantum Algorithms utilise Superposition and Entanglement to achieve speed.

A classical program executes a single path. A quantum algorithm, by contrast, operates on a superposition of paths and uses interference to reinforce correct solutions and cancel incorrect ones. Entangled qubits allow operations to act on the joint state space of multiple qubits, which a classical computer would in general need exponentially growing resources to represent.

This is not the universal “faster computer” but rather a new model of computing that will outperform classical models on specific tasks.

Several well-known examples demonstrate why quantum algorithms matter. Shor’s algorithm can factor large integers far faster than any known classical method, which is why quantum computing is so significant for modern cryptography. Grover’s algorithm achieves a quadratic speedup for unstructured search and could be used to accelerate subroutines in larger workflows.
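
Grover’s quadratic speedup can be seen in a small classical simulation. The sketch below tracks the amplitudes of an N-item search directly in NumPy instead of building a gate-level circuit, and it assumes exactly one marked item; the function name is illustrative.

```python
import math

import numpy as np


def grover_search(num_items: int, marked: int) -> tuple[int, float]:
    """Simulate Grover's algorithm; return (iterations, success probability)."""
    state = np.full(num_items, 1 / math.sqrt(num_items))   # uniform superposition
    iterations = math.floor(math.pi / 4 * math.sqrt(num_items))
    for _ in range(iterations):
        state[marked] *= -1                  # oracle: flip the sign of the marked item
        state = 2 * state.mean() - state     # diffusion: invert about the mean
    return iterations, float(state[marked] ** 2)


for n in (8, 1024):
    steps, prob = grover_search(n, marked=3)
    print(n, steps, round(prob, 4))
```

The number of iterations grows with the square root of the number of items, whereas a classical search of an unstructured list needs on the order of N lookups. Interference is what concentrates the probability on the marked item.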

In science and engineering, Quantum Simulation Algorithms have the greatest potential because molecules and materials are quantum systems; accurately representing them becomes intractable as complexity increases on classical computers.

In practice, realising these concepts as actual advantages depends on both hardware realities and algorithm design. Many quantum algorithms require deep circuits with thousands to billions of operations and high-precision gates to maintain the interference patterns that carry the solution. The reality of today’s noisy devices presents a tension, as decoherence and gate errors tend to accumulate rapidly. Therefore, near-term approaches typically involve utilising short circuits, hybrid quantum-classical loops, and problem-specific heuristics that can tolerate some level of noise.

Quantum error correction preserves the reliability of long computations. By encoding a logical qubit across many physical qubits and repeatedly measuring syndromes, it can detect and correct errors before the stored quantum information is irretrievably lost. It is the reliability layer that enables the deepest and most powerful quantum algorithms to run at useful scales, thereby translating elegant theoretical constructs into dependable, repeatable computation.
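The idea of encoding plus syndrome measurement can be sketched with the three-qubit bit-flip repetition code. This is a simplified classical model that assumes only independent bit-flip errors; a real quantum code extracts the same parities through ancilla qubits without reading the data, and it must also handle phase flips. All function names here are illustrative.

```python
from itertools import product


def encode(bit):
    """Encode one logical bit into three physical bits."""
    return [bit, bit, bit]


def syndrome(q):
    """Parity checks on neighbouring pairs; they reveal errors, not the data."""
    return (q[0] ^ q[1], q[1] ^ q[2])


# Each syndrome points at the single bit most likely to have flipped.
CORRECTION = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def correct(q):
    """Apply the correction suggested by the syndrome."""
    q = list(q)
    flip = CORRECTION[syndrome(q)]
    if flip is not None:
        q[flip] ^= 1
    return q


def decode(q):
    """Majority vote back to one logical bit."""
    return int(sum(q) >= 2)


def logical_error_rate(p):
    """Exact logical error rate for independent bit flips with probability p."""
    total = 0.0
    for errors in product([0, 1], repeat=3):
        noisy = [b ^ e for b, e in zip(encode(0), errors)]
        if decode(correct(noisy)) != 0:
            k = sum(errors)
            total += p ** k * (1 - p) ** (3 - k)
    return total


print(logical_error_rate(0.01))
```

With a physical error probability of 1%, the logical error probability drops to roughly 0.03%, because the code fails only when two or more bits flip at once.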

Quantum Algorithms and Error Correction: Interplay and Optimisation

Quantum algorithms are a key component of quantum computing: they solve certain problems far more efficiently than any known classical method. Running them accurately at scale, however, depends on error-correction mechanisms.

Error correction detects and repairs faults as they occur, so that quantum information is preserved throughout the computation.

Getting the two to work well together means designing them jointly: algorithms should be structured to suit the error-correcting code they run on, and codes should be chosen to suit the algorithm’s demands. The aim is to improve both computational efficiency and error resilience (the ability to operate under imperfect conditions) while minimising the loss of quantum information.

The development of practical quantum applications will depend on optimising the trade-off between computational efficiency and error resilience.

Optimising Quantum Algorithms using Error Correction:

• Compatibility: Quantum algorithms should be able to run on top of error-correction codes such as surface codes.

• Resource Management: Physical qubits are a finite resource that must be divided between performing computation and implementing error correction.

• Optimisation: The goal is to find algorithms that take the least time while still reaching the desired level of precision.
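The resource trade-off in the points above can be estimated with a commonly quoted surface-code heuristic, in which the logical error rate falls as roughly `a * (p / p_th) ** ((d + 1) / 2)` for code distance `d`. The constants `a = 0.03` and `p_th = 1e-2`, the qubit count `2 * d**2 - 1` for a rotated surface code, and the function names are assumptions chosen for illustration, not measured values.

```python
def logical_error_rate(p, d, p_th=1e-2, a=0.03):
    """Heuristic logical error rate for distance d (assumed constants)."""
    return a * (p / p_th) ** ((d + 1) / 2)


def min_distance(p, target, p_th=1e-2, a=0.03):
    """Smallest odd code distance whose heuristic error rate meets the target."""
    if p >= p_th:
        raise ValueError("physical error rate must be below the threshold")
    d = 3
    while logical_error_rate(p, d, p_th, a) > target:
        d += 2
    return d


def physical_qubits(d):
    """Data plus measurement qubits for one rotated surface-code patch."""
    return 2 * d * d - 1


# Illustrative target: one logical error per 10^12 operations.
d = min_distance(p=1e-3, target=1e-12)
print(d, physical_qubits(d))
```

Under these assumptions a physical error rate of 0.1% calls for distance 21, or 881 physical qubits per logical qubit, which illustrates how much of the qubit budget goes to error correction rather than computation.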

Progress in quantum computing will depend on treating quantum algorithms and error correction as partners rather than as separate problems. Understanding how the two interact gives researchers the knowledge needed to build practical applications and, ultimately, to reach the central goal of quantum computing: solving complex, real-world problems.

Challenges and Limitations in Current Quantum Error Correction Approaches

Quantum error correction also faces numerous technical limitations. The first is the implementation of the error-correcting codes themselves. These codes require a large number of physical qubits, which is easy to assume in theory but very difficult to realise in hardware.

A second limitation is that every quantum gate operation used in an error-correction protocol is itself imperfect, with fidelity below unity. The correction procedure can therefore introduce new errors, reducing the overall effectiveness of the protocol. Achieving reliable, high-fidelity quantum operations remains a significant hurdle for quantum error correction.

A third challenge is maintaining coherence across the many qubits of a large-scale quantum computer. The more qubits a system contains, the more opportunities there are for errors to occur, and the harder those errors become to correct.

In summary, the main limitations of current quantum error correction approaches are:

• Resources: A large number of qubits is needed to correct errors reliably.

• Fidelity of gates: Errors from imperfect quantum gate operations are introduced into the system.

• Scalability: Maintaining coherence as the number of qubits increases.

These issues can be addressed through continued advances in both quantum hardware and error-correction methods. Progress in both areas will be essential to achieving reliable, scalable quantum computing systems.

The Road to Scalable Quantum Machines: Future Directions and Research Frontiers

Research toward scalable quantum machines is proceeding along two main lines: improving the performance of current quantum systems, and finding more effective ways to perform error correction.

More efficient error-correction codes reduce the number of physical qubits needed to protect each logical qubit. That lowers the resources required to build large-scale quantum computers and, in turn, makes future generations of machines more scalable.

In parallel, researchers continue to improve how error correction is integrated with quantum hardware. Building error correction into the hardware design from the start may improve both performance and reliability and lead to more usable quantum devices. These efforts feed into ongoing work on the next generation of large-scale quantum systems.

by Rick Rothenberg (https://unsplash.com/@rick_rothenberg)

Conclusion: The Reliable Future of Quantum Error Correction

Significant progress towards reliable quantum error correction appears likely. As the technology advances, improved error correction should substantially enhance the reliability of future quantum computers, and it will be critical to realising the potential of quantum computing.

The greatest progress so far has come in developing error-correcting codes and integrating them with hardware. These developments have improved both the efficiency and the reliability of quantum systems, and they point the way to systems that are both scalable and robust.

Enthusiasm for reliable quantum computing remains high, and researchers continue to work on the hurdles that stand between today’s devices and “quantum supremacy.” Further development of quantum error correction will be crucial to advancing quantum technologies and to laying the groundwork for the next generation of computing.

FAQs

- What is quantum error correction (QEC)?

Quantum error correction protects quantum information by encoding one logical qubit across multiple physical qubits and using syndrome measurements to detect and correct errors without measuring the encoded data itself.

- Why do quantum computers need error correction?

Because of decoherence and noise, qubits are highly susceptible to information loss. If no corrections are made, errors accumulate rapidly over time, making longer calculations unreliable.

- What types of errors happen in quantum systems?

Common error types include bit flips, phase flips, and combinations of the two (as in depolarising noise). They are most often caused by environmental influences and imperfectly executed operations.

- How is quantum error correction different from classical error correction?

Classical correction can copy bits and read them directly. Quantum correction cannot clone an unknown state, and measuring a qubit directly disturbs it, so information about errors must be extracted indirectly via syndrome measurements.

- What is a “logical qubit” vs. a “physical qubit”?

A physical qubit is an actual piece of hardware, while a logical qubit is a single unit of quantum information encoded across multiple physical qubits so that it is far more reliable than any one of them.

- What are syndrome measurements, and why are they important?

Syndrome measurements work much like parity checks: they indicate whether an error occurred and where it most likely occurred, while leaving the encoded quantum state intact so that it can be corrected.

- What are surface codes, and why are they popular?

Surface codes are QEC codes defined on a 2D grid of qubits that use only local interactions to detect errors; this makes them practical for many hardware layouts and, in theory, scalable.

- What does “fault-tolerant quantum computing” mean?

It is the ability to run quantum algorithms accurately using QEC, together with architectures that prevent errors from spreading out of control.

- What are the biggest current challenges in QEC?

The main barriers are high qubit overhead, imperfect gate and measurement fidelities that can introduce new errors, and the loss of coherence as the number of qubits increases.

- What breakthroughs could make scalable quantum machines feasible sooner?

Codes with lower overhead, faster and more accurate decoding, better qubit coherence and connectivity, and closer hardware-level integration of real-time control with error correction.