You typed “Best Italian Restaurants Near Me” into the search bar of your browser. In less than a second after typing those exact words, a map appeared on your screen with a curated list of “the Best” Italian Restaurants in your local area. Each restaurant was listed with its operating hours and user ratings, which were glowing and spoke highly of each individual restaurant.

Take note of exactly what happened there: the computer didn’t merely match the individual words. It also interpreted the intent behind them. In this case, “best” meant you wanted the most highly rated options, “Italian restaurants” referred to a food category, and “Near Me” told it to use your device’s location to find results close to you. That is much more than a simple keyword search.

The unseen power behind technology’s seemingly magical ability to comprehend everyday language is called natural language processing (NLP). It is the same technology that lets your smartphone’s keyboard predict how you’re going to finish a sentence, and that lets a voice assistant like Siri or Alexa interpret a complex question. You’ve probably used it many times today without even thinking about it.

So what is Natural Language Processing (NLP)? NLP is a branch of Artificial Intelligence (AI). Older software could only follow explicit instructions: thousands of lines of hand-written code and rigidly pre-defined forms of user input. The new generation of NLP systems is instead trained on large volumes of data (conversations or text) and learns to identify the patterns in it.

The system can then use those identified patterns as the basis for developing its own interpretations of word meanings, rather than relying solely on human-defined literal definitions.

While the specific methods behind this technology are sophisticated, the basic ideas are fairly easy to understand. Along with examples of where it is applied (e.g., search engines, spam filters), we will give you a general overview of the extensive body of research behind the tools you already use every day.

The Core Mission: What Do We Mean by Natural Language Processing?

Early computers could carry out the instructions they were given, but they had no way to interpret the meaning of our language. You could tell a computer to calculate 2 + 2 with a precise command, but you could not ask it “how is the weather today?” and expect it to understand.

Modern NLP attempts to bridge this language gap. Its goal is to determine why you said something. When I type “what are the best coffee shops open right now?”, it is not just looking for the words “coffee shops” and “open right now”; it is trying to find a well-reviewed local cafe that I can visit right away.

This is the difference between matching keywords and finding the reason behind your words. At its core, NLP is about identifying a person’s intentions and needs.

Beyond the words themselves, a computer needs enough context to understand what you are doing. For instance, if you tell your smart speaker to “book a flight,” it knows from the context of the request that “book” means “reserve.” If, on the other hand, you message your best friend about how much you love a new book, the word “book” means something entirely different.
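To make the “book” example concrete, here is a minimal Python sketch of context-based guessing. It is only an illustration: the cue-word lists and the function name `guess_sense_of_book` are made up for this example, and real NLP systems learn these associations from data rather than from hand-picked lists.

```python
# Toy word-sense guesser: decides whether "book" means "reserve"
# or "something you read" by looking at the other words nearby.
# The cue lists are hand-picked assumptions for illustration only.
RESERVE_CUES = {"flight", "table", "hotel", "ticket", "appointment"}
READING_CUES = {"read", "reading", "author", "novel", "chapter", "love"}


def guess_sense_of_book(sentence: str) -> str:
    # Lowercase every word and strip surrounding punctuation.
    words = {w.strip(".,!?\"'").lower() for w in sentence.split()}
    if "book" not in words:
        return "not present"
    reserve_score = len(words & RESERVE_CUES)
    reading_score = len(words & READING_CUES)
    if reserve_score > reading_score:
        return "reserve"
    if reading_score > reserve_score:
        return "reading material"
    return "unclear"


print(guess_sense_of_book("Book a flight to Rome"))
print(guess_sense_of_book("I love this new book so much"))
```

The same word gets two different labels purely because of its neighbors, which is the core idea behind using context.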

The surrounding situation and the conversation so far also affect how a device interprets your words. This is why a smart assistant can handle a complex question well and then fail on the simplest follow-up question: it has lost its “context.”

Understanding the context of what someone says, and their intent in saying it, is one of the hardest challenges computers face in processing human language. Humans do this naturally, without giving much thought to how. Building a computer system that weighs all of those factors for every piece of information is quite challenging.

Once a computer can determine the intended meaning of what a user says, the next big hurdle for the machine will be understanding the emotional content in those same words. The ability to interpret the emotional content of the words a user chooses to express enables an application to determine whether a product review was written in a positive or negative emotional state.

NLP vs Traditional Programming

| Feature | Traditional Programming | NLP Systems |
|---|---|---|
| Input Type | Structured data | Human language |
| Flexibility | Low | High |
| Understanding | Rule-based | Context-based |
| Adaptability | Limited | Learns from data |
| Complexity | Lower | Higher |

Insight: NLP allows machines to handle messy, real-world human language, unlike rigid traditional systems.

Source:

- IBM NLP Overview: https://www.ibm.com
- Stanford NLP Research: https://nlp.stanford.edu

The Digital Detective: How Apps Know if a Review is Happy or Mad

Sentiment Analysis is probably one of the best-known NLP tricks. This trick helps determine how someone feels about something they’ve written, so think of it as having a digital detective read a text to figure out whether it’s happy, sad, mad, etc.

Sentiment analysis simply means using computer systems to automatically label the emotional tone of a piece of writing as positive, negative, or neutral. These systems learn which words and phrases usually signal each emotion:

Positive: “The battery life was great.” “My experience with this has been fantastic.”

Negative: “What a total waste of money.” “The app keeps crashing on me again.”

Neutral: “Today I received my product.”

How would a machine know when to label something happy vs. sad? It isn’t handed a long list of happy words and sad words. Instead, it is trained on huge collections of customer reviews from the internet that have already been labeled as good (positive) or bad (negative).

The machine then works through all of those reviews and looks for patterns. It finds that words and phrases like “fantastic” and “highly recommend” are very likely to appear in good reviews, while words like “disappointed” and “terrible” are very likely to appear in bad ones. After seeing enough examples, the machine can predict whether a new review is good or bad, even if it has never seen that review before.
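If you’re curious what “learning from labeled reviews” might look like in code, here is a tiny sketch in Python. It assumes a simple word-counting approach (in the style of a naive Bayes classifier) and a made-up training set of six reviews; the names `TRAINING_REVIEWS`, `train`, and `classify` are invented for this example. Real systems use far more data and far more capable models.

```python
import math
from collections import Counter

# Tiny hand-written training set (an assumption for illustration;
# real systems learn from vastly more labeled reviews).
TRAINING_REVIEWS = [
    ("the battery life was great", "positive"),
    ("fantastic experience highly recommend", "positive"),
    ("great product fantastic value", "positive"),
    ("what a total waste of money", "negative"),
    ("terrible app it keeps crashing", "negative"),
    ("disappointed terrible battery", "negative"),
]


def train(reviews):
    """Count how often each word appears under each label."""
    counts = {"positive": Counter(), "negative": Counter()}
    for text, label in reviews:
        counts[label].update(text.lower().split())
    return counts


def classify(text, counts):
    """Pick the label whose word counts best explain the text.

    Uses add-one smoothed log-probabilities, naive Bayes style,
    and ignores words that never appeared in training.
    """
    vocab = set(counts["positive"]) | set(counts["negative"])
    scores = {}
    for label, word_counts in counts.items():
        total = sum(word_counts.values())
        scores[label] = sum(
            math.log((word_counts[word] + 1) / (total + len(vocab)))
            for word in text.lower().split()
            if word in vocab
        )
    return max(scores, key=scores.get)


model = train(TRAINING_REVIEWS)
print(classify("fantastic battery", model))  # a review it has never seen
print(classify("terrible waste", model))
```

Nobody told the program that “fantastic” is a happy word. It picked that up from which reviews the word showed up in.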

This type of technology is used all the time today. For example, when an e-commerce site says, “92% of our reviews are positive,” sentiment analysis is hard at work. Companies also monitor social media to collect immediate feedback on their product lines and advertising campaigns. They use this technology to make decisions more quickly and to see what their customers really think.

However, knowing how people feel is only half of the equation. The next giant leap in NLP is to completely break down language barriers and allow your device to act as a pocket translator.

Sentiment Analysis Example Table

| Review Text | Sentiment | Why |
|---|---|---|
| This product is amazing | Positive | Strong positive word |
| It's okay, nothing special | Neutral | Balanced tone |
| Terrible experience, waste of money | Negative | Strong negative words |

Example Insight: Companies use this to analyze thousands of reviews instantly.

Source:

- Google Cloud NLP: https://cloud.google.com
- Microsoft Azure AI: https://azure.microsoft.com

Your Pocket Translator: From Clunky Words to Fluent Conversation

Have you ever typed a sentence into a web translator and received a ridiculous result? That is how older translation techniques worked: they were little more than electronic dictionaries. They replaced each word individually and therefore lost sight of the complexities of real language.

For instance, the Spanish phrase “tomar el pelo” translates word for word as “to take the hair,” which makes no sense in English. It is really an idiom meaning “to pull someone’s leg.” A system that swaps words one at a time has no understanding of what is actually being communicated.
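Here is a small Python sketch of that word-by-word failure. The dictionary and phrase table are hypothetical and tiny, written just for this example. Note that the hand-written phrase table is only a stand-in: modern translation systems learn such correspondences from data instead of looking them up in a list.

```python
# Toy Spanish-to-English word dictionary (hypothetical and far from complete).
WORD_DICTIONARY = {"tomar": "take", "el": "the", "pelo": "hair"}

# Known multi-word expressions, translated as a single unit.
PHRASE_TABLE = {"tomar el pelo": "pull someone's leg"}


def translate_word_by_word(sentence: str) -> str:
    """Old-style approach: swap each word independently."""
    words = sentence.lower().split()
    # Words missing from the dictionary are passed through unchanged.
    return " ".join(WORD_DICTIONARY.get(word, word) for word in words)


def translate_with_phrases(sentence: str) -> str:
    """Check for a known expression first, then fall back to single words."""
    text = sentence.lower()
    if text in PHRASE_TABLE:
        return PHRASE_TABLE[text]
    return translate_word_by_word(text)


print(translate_word_by_word("Tomar el pelo"))
print(translate_with_phrases("Tomar el pelo"))
```

The first function produces the literal, nonsensical version. The second gets the idiom right only because someone told it about that exact phrase, which is why learning from millions of real translations scales so much better.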

Today’s NLP technologies have made significant progress in translation because they use machine learning-based approaches rather than the older dictionary-based ones. These systems are trained on enormous numbers of professional human translations: think of books, articles, and government documents that exist in more than one language.

They then use millions of such examples to learn the deep connections between the ways different languages express the same concepts and ideas. As a result, a system can recognize that “to pull someone’s leg” is the most appropriate way for an English speaker to convey what “tomar el pelo” means in Spanish.

Because of recent advances, there now exists a new form of translation – translation of meaning rather than translation of words.

This kind of “meaning” translation is a significant advance: your phone can now cope with the complexities of language (e.g., sentence structure, cultural expressions). The same ability matters when you ask a digital assistant or a chatbot a question, because its ability to give you exactly what you asked for depends on understanding what you are really saying.

Machine Translation Accuracy Evolution

| Year | Accuracy Level | Technology |
|---|---|---|
| 2010 | 60% | Rule-based systems |
| 2016 | 75% | Statistical models |
| 2023 | 90% | Neural networks (AI) |
| 2026 | Near-human | Advanced LLMs |

Key Insight: Modern AI translation is approaching human-level fluency in many languages.

Source:

- Google AI Blog: https://ai.googleblog.com
- Statista AI Translation Data: https://www.statista.com

Deconstructing Your Request: How Siri and Chatbots Isolate the Details

Identifying the goal behind a request, your intent, is a good start. However, it is only one side of the equation.

Consider the simple voice command you are issuing to your phone, “Remind me to call Mom tomorrow at 5 pm.” Your digital assistant should be able to determine that your intent is to create a reminder, but where will it find the important information (what, who, and when) in the rest of the statement? You cannot simply create a generic reminder; you need some specific data for it to be useful.

A technique known as named entity recognition (NER), which recognizes and labels the pertinent entities in an input sentence, does just that.

Here is how it works: the machine recognizes “Mom” as a person entity, “tomorrow” as a date entity, and “5 pm” as a time entity. This ability is developed by processing many millions of example sentences. In essence, the smart assistant functions as a team: one component of the NLP system recognizes what the user wants to do (intent recognition), and the other pulls the specific details out of the user’s input so the task can be completed (named entity recognition).
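To show the two teammates side by side, here is a toy Python sketch that handles just this one kind of command. It uses hand-written patterns, which is an assumption made to keep the example short; real assistants learn to spot intents and entities from data. The function name `extract_reminder_details` and the label `create_reminder` are invented for this illustration.

```python
import re


def extract_reminder_details(command: str) -> dict:
    """Label the intent and the key entities in one narrow kind of command."""
    details = {"intent": None, "person": None, "date": None, "time": None}

    # Intent recognition (toy version): look for a trigger phrase.
    if re.search(r"\bremind me\b", command, re.IGNORECASE):
        details["intent"] = "create_reminder"

    # Entity recognition (toy version): one hand-written pattern per entity type.
    person = re.search(r"\bcall (\w+)", command, re.IGNORECASE)
    date = re.search(r"\b(today|tomorrow)\b", command, re.IGNORECASE)
    time = re.search(
        r"\b(\d{1,2}(?::\d{2})?\s?(?:am|pm))\b", command, re.IGNORECASE
    )

    if person:
        details["person"] = person.group(1)
    if date:
        details["date"] = date.group(1)
    if time:
        details["time"] = time.group(1)
    return details


print(extract_reminder_details("Remind me to call Mom tomorrow at 5 pm."))
```

The output is a small structured record (what, who, when) that a reminder app could act on. Change the wording much and these rigid patterns break, which is exactly why learned models took over.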

The power of this team lies in how well the components work together. You can see it in a chatbot that correctly identifies and books the right flight, and in a phone that recognizes a voice command and sets the correct alarm. Fundamentally, this is a very sophisticated form of language interpretation and analysis.

The Two Faces of Language AI: Natural Language Understanding vs Natural Language Generation

Thus far we’ve discussed how computers can understand language, much like a student reading a book. This side of the coin is Natural Language Understanding (NLU), which focuses on extracting meaning, identifying intent, and pulling relevant information out of natural language input.

NLU is the “listening” part of the brain: it is at work when an email spam filter decides whether a message is spam, or when a search engine picks up on the subtleties of the phrase you entered.

When the machine needs to speak, it requires another component: Natural Language Generation (NLG), the complement to NLU. Where NLU “reads,” NLG “writes.” NLG’s job is to create sentences, summarize articles, compose your chatbot’s responses, and transform structured data and ideas into readable text.

Many of the impressive tools people use today combine these two powerful components. An example of this is a text summarization tool. This type of tool uses NLU to comprehend a lengthy article, then switches to NLG to create a new, more concise version of the article in its own words.

It is this ability to move from comprehension to creation that makes current AI technology so revolutionary. So the next logical question is: how did these technologies become such exceptional writers, and where did they get all their knowledge?

NLU vs NLG Breakdown Table

| Aspect | NLU (Understanding) | NLG (Generation) |
|---|---|---|
| Purpose | Interpret input | Generate output |
| Example | Detect intent | Write a reply |
| Input | Human language | Structured data |
| Output | Meaning/intent | Natural sentences |
| Use Case | Chatbot understanding | Chatbot responses |

Insight: Every chatbot uses both: NLU to understand, NLG to respond.

Source:

- AWS AI Services: https://aws.amazon.com
- IBM Watson NLP: https://www.ibm.com

The Power Plant: How “Training on the Internet” Created Super-Smart AI

This advanced ability to write fluently is not based on a new set of grammar rules, but on a new capacity for learning. Most of the top writing assistants and chatbots rely on large language models (LLMs). The term “large” refers to the size of the model itself and the computing power it requires, and just as much to the enormous volume of text each model was trained on.

As the model reads through all of that data, it learns to do something very simple: predict the most probable next word in a given piece of text. When you ask ChatGPT to compose a poem, for example, it doesn’t “think” about poetry. Instead, it starts with your request, guesses the most probable word to follow it, then uses that word to guess the next one, and continues until it has a complete poem. Essentially, it makes a long chain of highly informed guesses at a speed that would be impossible for a human.
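The “guess the next word, then guess again” loop can be sketched in a few lines of Python. This is a deliberately tiny stand-in, assuming a simple word-pair counter (often called a bigram model) and a made-up three-sentence corpus. Real LLMs use neural networks trained on vastly more text, so treat this as an analogy rather than how ChatGPT is built.

```python
from collections import Counter, defaultdict

# A made-up miniature "training corpus" (illustrative assumption).
CORPUS = "the cat sat on the mat . the cat sat on the sofa . the dog ate the fish ."


def build_next_word_counts(text: str):
    """For every word, count which words follow it and how often."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def most_likely_next(word: str, counts):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(start: str, counts, max_words: int = 4) -> str:
    """Repeatedly append the most likely next word, one guess at a time."""
    output = [start]
    while len(output) < max_words:
        next_word = most_likely_next(output[-1], counts)
        if next_word is None or next_word == ".":
            break
        output.append(next_word)
    return " ".join(output)


counts = build_next_word_counts(CORPUS)
print(most_likely_next("cat", counts))
print(generate("the", counts))
```

Each new word is chosen only by asking “what usually comes next?”, and the answer is fed back in to choose the word after that.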

This is why tools such as ChatGPT produce “human”-sounding writing: they have learned the patterns of how we write, forming a broad yet strong sense of how words are combined to convey meaning. Yet even after reading a large share of the internet, these LLMs can still be tripped up by the strange and wonderful inconsistencies of human language. They remain fallible.

Why Your Chatbot Fails: The Quirks That Make Human Language a Nightmare for AI

If today’s AI can write essays and code, then why does it sometimes struggle with simple conversations? It boils down to one thing: the beauty of, and frustration with, the way humans really communicate. One of the most significant obstacles in processing human language is ambiguity.

For instance, when a computer reads the sentence “The chicken is ready to eat,” it has to choose between two readings. Is the chicken about to be fed, or is the chicken food for us to eat? Without additional information that connects the sentence to the world around us (real-world context), a machine that simply attempts to predict the next word may follow the wrong path. A person would settle the question from the situation (are we at a farm or in a kitchen?), but an AI may not have enough context to make the correct decision.

AI also finds sarcasm and irony much harder to identify than literal language. For example, if you tell a friend at the end of a very long day, “Oh great, I get to do another two hours of data entry tonight,” you both know you are being sarcastic. Your tone of voice and the expression on your face make that clear.

A machine sees only the words “Oh great,” which would suggest that you’re having a great time. It knows the words but doesn’t know the song. That is probably one of the most difficult things for NLP to figure out, since sarcasm depends on tone and shared context that a list of written examples found online rarely captures.
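You can watch a simple program fall into this trap. The Python sketch below assumes the most basic possible approach, counting words from two hand-picked lists; the lists and the function name `lexicon_sentiment` are made up for this example. Modern systems are more sophisticated, but the underlying difficulty is the same.

```python
# Hand-picked word lists (illustrative assumptions, not a real lexicon).
POSITIVE_WORDS = {"great", "fantastic", "love", "amazing"}
NEGATIVE_WORDS = {"terrible", "awful", "hate", "waste"}


def lexicon_sentiment(text: str) -> str:
    """Label text by counting positive words minus negative words."""
    words = [w.strip(".,!?\"'").lower() for w in text.split()]
    score = sum(w in POSITIVE_WORDS for w in words) - sum(
        w in NEGATIVE_WORDS for w in words
    )
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


sarcastic = "Oh great, I get to do another two hours of data entry tonight"
print(lexicon_sentiment(sarcastic))
```

The program spots “great” and confidently labels the sentence positive, because nothing in the words themselves signals the eye-roll that went with them.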

These two issues are similar in that both illustrate the fundamental difference between using computers to do math with words and truly comprehending what those words mean. They show how much context matters in interpreting any message, whether it comes from a device or from a person.

Computers view language as a mathematical equation. Humans view it as a shared experience. The large gap between these views may be why an AI can seem so smart one moment and so baffling the next. How have developers tried to build more contextual understanding into their AI systems? They rely on highly advanced software tools to help.

Why NLP is Hard

| Challenge | Example | Problem for AI |
|---|---|---|
| Ambiguity | “Bank” (river vs. money) | Multiple meanings |
| Sarcasm | “Great job...” (negative tone) | Opposite intent |
| Slang | “That's lit” | Informal language |
| Context | “He did it again” | Missing reference |
| Grammar Errors | Typos | Hard to parse |

Insight: Human language is inconsistent and context-heavy, making NLP extremely complex.

Source:

- Stanford NLP Group: https://nlp.stanford.edu
- MIT Technology Review AI: https://www.technologyreview.com

Your First Step “Under the Hood”: The Tools of the Trade

What do we call the clever digital tools that programmers use to handle all these language complexities? Programming is similar to cooking an elaborate meal. Chefs don’t grow wheat to bake bread for every new recipe; they start with a fully stocked pantry and good-quality ingredients.

Likewise, developers typically work in Python, one of the most popular and powerful programming languages, and they don’t start with a blank slate. They use NLP “toolkits,” commonly referred to as “libraries.” Libraries are collections of prewritten code for performing common language processing tasks, and they take care of the low-level, mundane work. So instead of writing thousands of lines of code to teach a program to break a paragraph into separate sentences, a developer can write a few lines that call a library such as spaCy or NLTK.

Libraries can automate many of the underlying processes required to perform most “fundamental” tasks, such as part-of-speech tagging and named entity recognition. This automation is important because it provides a baseline of functionality that can be used for a wide variety of applications beyond simple tasks.
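To get a feel for the kind of low-level chore a library takes off a developer’s hands, here is a hand-rolled sketch that uses only Python’s built-in tools. To be clear, this is not spaCy or NLTK code. It is a simplified imitation of one small job those libraries do, and the function names are invented for this example. Real libraries handle many cases this sketch would get wrong, such as abbreviations like “Dr.” in the middle of a sentence.

```python
import re


def split_sentences(text: str) -> list:
    """Split text after '.', '!' or '?' when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


def tokenize(sentence: str) -> list:
    """Break a sentence into words and individual punctuation marks."""
    # A word may contain one apostrophe (as in "Let's");
    # any other non-space symbol becomes its own token.
    return re.findall(r"\w+(?:'\w+)?|[^\w\s]", sentence)


text = "I love pasta. Do you? Let's eat!"
for sentence in split_sentences(text):
    print(sentence, "->", tokenize(sentence))
```

Even this toy version needs careful pattern-writing, and it covers only the easy cases. That is the pantry-stocking work libraries do so that developers don’t have to.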

Another benefit is that libraries let programmers focus on what differentiates their application rather than on functions common to all applications. A library like spaCy gives you a foundation to build on. Even if you can’t program, searching for something as simple as “spaCy text analysis example” in your favorite search engine should give you an idea of the actual code that drives much of the AI and machine learning technology you use every day.

You’re Now an Informed Observer of the AI Revolution

What once seemed magical, such as a smart speaker that listens to you or a search bar that understands what you are looking for, isn’t magic anymore. The days of treating this technology as a black box are over. You’ve now had a glimpse of how it does what it does: a computer uses large amounts of text to learn the patterns in language and predict what comes next.

This lets you grow from being a consumer of a tool into someone with a deeper knowledge of how it works. You do not have to start developing anything yourself; you simply need to notice it in use. The next time your phone auto-completes a sentence or a translation app translates your words, you will realize that Natural Language Processing (NLP) is at work, recognizing patterns that it has been trained to recognize.

Now you can also evaluate this technology with a critical eye. Understanding and appreciating both the complexity and the limitations of NLP matters, given its role in shaping our everyday lives.

FAQs

Question: How Does Natural Language Processing Fit into Artificial Intelligence?

Answer: NLP is a subset of artificial intelligence that focuses on how computers process natural language data. It combines linguistics, computer science, and machine learning so that AI systems can both consume and produce written or spoken words. As such, NLP enables AI systems to perform tasks such as translation, summarization, and question answering.

Question: What are some ways natural language processing helps machines to interpret human language?

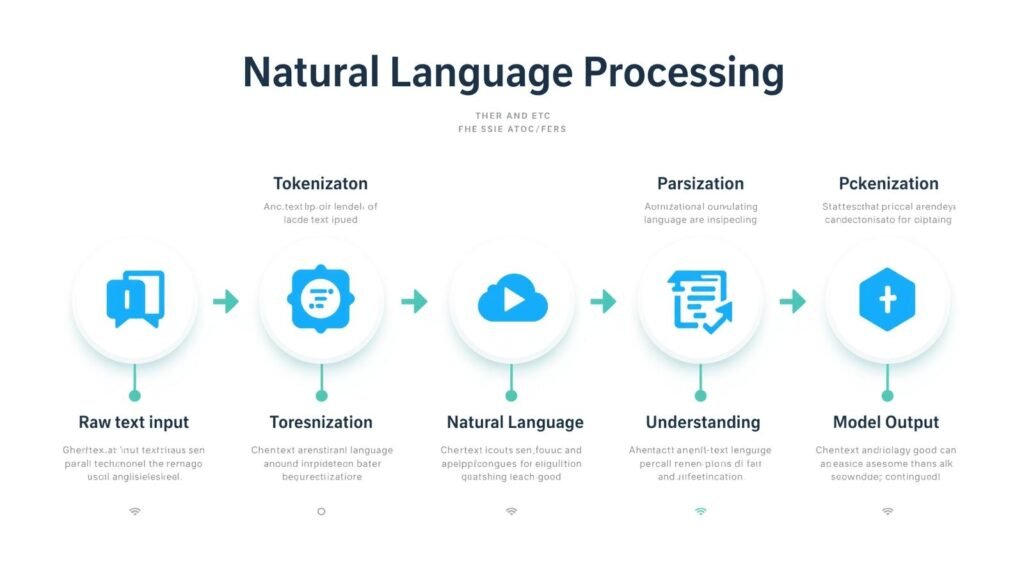

Answer: NLP employs a variety of methods and models to convert unstructured raw text or voice data into structured forms that a machine can examine and analyze. The process includes several stages: tokenization, part-of-speech tagging, parsing, and semantic interpretation. Together, these stages allow systems to recognize the meanings, intentions, and contexts of human language.

Question: What is the difference between Natural Language Processing and Natural Language Understanding?

Answer: Natural Language Processing (NLP) is an umbrella term for the methods and approaches used to handle and transform human language data. Natural Language Understanding (NLU) is a subfield of NLP that focuses on extracting the underlying meaning and intent from text. In other words, the key difference lies in scope and focus: NLP encompasses all processes, both mechanical and interpretive, whereas NLU focuses solely on interpretation.

Question: How does Natural Language Processing (NLP) make the use of real-world AI practical?

Answer: For AI systems to be useful in daily life, people must be able to interact with them in natural spoken or written language rather than in programming code or highly structured input. That requires the ability to process and understand natural language. Most applications, including virtual assistants, chatbots, search engines, and customer support automation, depend on NLP to interpret user inputs and provide relevant, contextualized responses. Without it, the responses provided by these systems would likely be irrelevant or lack context.

Question: What are some common challenges in Machine Understanding of Language?

Answer: Some of the typical challenges in machine understanding of language are:

Ambiguity, sarcasm, idioms, domain-specific jargon, and constantly evolving slang.

Human language carries a lot of implicit knowledge and contextual information that is not explicitly expressed in the text itself; this makes it very hard to develop Natural Language Understanding systems that interpret human language as effectively as humans do.

These challenges will need to be addressed with large amounts of data, advanced algorithms, and continually improving Natural Language Processing (NLP) techniques.